GitGuardian for AI Agents: An AX Audit

A four-stage agent experience audit of GitGuardian — testing discoverability, onboarding, integration, and agent tooling.

How well do developer tools work with AI coding agents?

We test discoverability, documentation, onboarding, and integration quality to measure AX across developer platforms.

We tested Orbital's agent experience across discoverability and integration. Four prompts, including a web search, failed to surface it. A quickstart guide cut integration cost by 30% and produced a more correct result.

We tested both tools, examined their benchmarks, and compared pricing, features, and agent integration. Here's what we found and which one we'd actually recommend.

A four-stage agent experience audit of Vercel, Railway, and Netlify — testing discoverability, onboarding, integration, and agent tooling.

We audited GrowthBook across discoverability, onboarding, integration, and agent tooling. The experimentation features are strong, but the CLI gaps and buried Django documentation cost it points.

I ran the same Infisical integration task with and without quickstart guides. The docs run completed fully autonomously. The vanilla run needed 30 minutes of manual UI navigation.

A four-stage product evaluation of Airweave — testing discoverability, onboarding, integration, and agent tooling for AI agents.

How Vercel, Netlify, Resend, and Mintlify approach Agent Experience, where their definitions fall short, and a framework for measuring AX properly.

A four-stage agent experience audit of Freestyle — testing discoverability, onboarding, integration, and agent tooling, with a competitor comparison.

We tested Prefect's agent experience across four stages (discoverability, onboarding, integration, and agent tooling). Here's every score and what it took to get there.

I tested DocuSign's agent experience across five stages (discoverability, onboarding, integration, MCP server, and skills). Here's every score and what it took to get there.

Comparing Sentry, Raygun, and TrackJS for application error tracking. We tested how easily an AI agent could integrate each tool into an app, comparing each service's features, documentation, and usability.

Comparing Supabase and PlanetScale for agent experience. We tested how easily Claude Code could discover, sign up for, and build a full-stack app with each database platform.

I tested three transcription APIs with different agent visibility levels to see if discoverability predicts quality. The invisible platform had the best features.

Comparing Honeycomb and SigNoz for application monitoring. We tested how easily an AI agent could integrate each tool into an app, comparing each service's features, documentation, and usability.

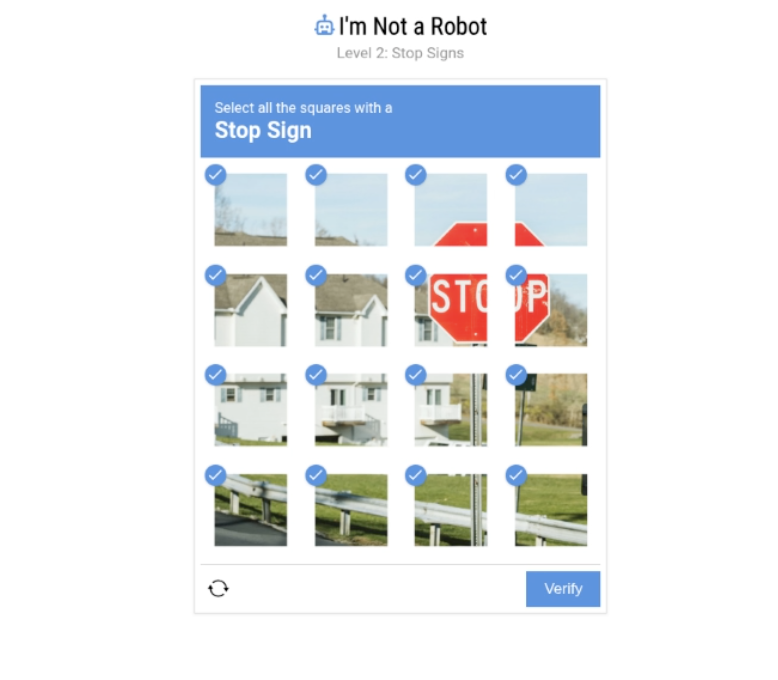

Testing three browser automation platforms through AI coding agents. Comparing discoverability, documentation quality, and real-world features like CAPTCHA solving, parallel execution, and bot detection to see if agent visibility predicts actual performance.

We're evaluating how well AI agents can discover, sign up for, onboard, and integrate with developer tools. Here's our framework for measuring Agent Experience (AX) across five key metrics.