Agent Experience follows the same pattern as previous shifts in software experience. User Experience defined how humans interact with products. Developer Experience defined how engineers build products. Agent Experience defines how AI systems use products.

AI agents promise automation with minimal human intervention. In practice, they depend on the systems we design. If those systems are not built for agents, the agents fail.

Consider two scenarios. An agent generates documentation using Mintlify and needs to retrieve structured content without exceeding token limits. Another agent deploys an application on Vercel and needs to verify deployment status through CI/CD pipelines. In both cases, success depends on how easily the agent can access, understand, and act on the system.

This is where Agent Experience becomes critical. It already affects whether agents succeed, fail, or abandon a task.

Several companies have started defining AX, including Vercel, Netlify, Resend, and Mintlify. Their approaches are useful, but limited. Most of them treat AX as an extension of Developer Experience, which leads to incomplete definitions and misleading metrics.

This article examines how these companies define AX, where their approaches fall short, and how AX should be defined and measured going forward.

What is Agent Experience?

Agent Experience relates to agents in the same way Developer Experience relates to developers. The difference is that agents do not read documentation the way humans do, and they do not infer unstated intent. They follow patterns, rely on structure, and fail when systems are ambiguous.

As a result, Agent Experience has become a practical concern, both for developers who rely on agents and for companies whose products are used through them.

The following sections look at how Vercel, Resend, Mintlify, and Netlify each approach it.

Vercel – AX as structured agent access

Vercel approaches Agent Experience through what can be described as agent resources. Their focus is on making systems readable and usable by agents through structured documentation, standardized interfaces, and direct tool access.

This approach emphasizes three components:

- machine-readable documentation, including Markdown and llms.txt formats

- direct connectivity through MCP-compatible tools

- reusable agent capabilities, often described as "skills"

These components aim to reduce friction when an agent needs to retrieve information or execute an action.

From this perspective, Vercel's AX rules focus on accessibility and structure.

Agents need to discover and retrieve information reliably. This requires structured metadata, predictable layouts, and clear tool descriptions. Documentation must be optimized for retrieval rather than reading.

Markdown becomes the default format. It reduces parsing complexity and improves token efficiency compared to HTML-heavy content.

Each product surface should expose a clear entry point for agents. This includes files such as llms.txt, as well as structured sitemaps that guide navigation and retrieval.
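To make the idea of an agent entry point concrete, here is a minimal sketch of how an agent-side script might read an llms.txt-style file and group its links by section. It assumes the layout from the public llms.txt proposal (an H1 title, a blockquote summary, then H2 sections containing Markdown link lists). The sample file, its URLs, and the `parse_llms_txt` helper are hypothetical and are not taken from Vercel's documentation.

```python
import re

# Hypothetical llms.txt content; product name and URLs are placeholders.
SAMPLE_LLMS_TXT = """\
# Example Product

> Hypothetical summary of the product for agents.

## Docs

- [Quickstart](https://example.com/docs/quickstart.md): Install and first request
- [API reference](https://example.com/docs/api.md)

## Optional

- [Changelog](https://example.com/changelog.md): Release history
"""

# Matches "- [title](url)" with an optional ": note" suffix.
LINK_RE = re.compile(
    r"^- \[(?P<title>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<note>.*))?$"
)


def parse_llms_txt(text):
    """Group the links of an llms.txt-style file by their H2 section."""
    sections = {}
    current = None
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                sections[current].append(
                    {
                        "title": match["title"],
                        "url": match["url"],
                        "note": match["note"] or "",
                    }
                )
    return sections


entry_points = parse_llms_txt(SAMPLE_LLMS_TXT)
for section, links in entry_points.items():
    print(section, [link["url"] for link in links])
```

The point of the sketch is that a predictable layout lets an agent build a navigation map with a few lines of parsing, instead of crawling and interpreting HTML pages.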

Vercel does not define a formal AX measurement framework. However, their AI Gateway and AI SDK telemetry expose several metrics that reflect how agents interact with their systems:

- requests by model over time

- time to first token (TTFT)

- input and output token counts

- cost per request and total spend

- P75 latency and P75 TTFT

- average tokens per request

- OpenTelemetry traces for generation and tool calls, including latency and error rates

These metrics provide visibility into usage, performance, and cost. They do not measure whether an agent successfully completes a task.
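As a rough illustration of what these numbers capture, the sketch below derives P75 latency, P75 TTFT, and average tokens per request from a small request log. The record fields and values are invented for the example and do not reflect Vercel's telemetry schema. The percentile uses the simple nearest-rank method, which is one of several common definitions.

```python
import math

# Hypothetical request log; field names and values are illustrative only.
REQUESTS = [
    {"latency_ms": 120, "ttft_ms": 40, "input_tokens": 900, "output_tokens": 100},
    {"latency_ms": 340, "ttft_ms": 90, "input_tokens": 1700, "output_tokens": 300},
    {"latency_ms": 200, "ttft_ms": 60, "input_tokens": 1300, "output_tokens": 200},
    {"latency_ms": 980, "ttft_ms": 300, "input_tokens": 3500, "output_tokens": 500},
    {"latency_ms": 410, "ttft_ms": 110, "input_tokens": 2200, "output_tokens": 300},
    {"latency_ms": 150, "ttft_ms": 50, "input_tokens": 800, "output_tokens": 200},
    {"latency_ms": 600, "ttft_ms": 180, "input_tokens": 2600, "output_tokens": 400},
    {"latency_ms": 275, "ttft_ms": 70, "input_tokens": 900, "output_tokens": 100},
]


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = max(math.ceil(pct / 100 * len(ordered)), 1)
    return ordered[rank - 1]


def summarize_requests(requests):
    total_tokens = sum(r["input_tokens"] + r["output_tokens"] for r in requests)
    return {
        "p75_latency_ms": percentile([r["latency_ms"] for r in requests], 75),
        "p75_ttft_ms": percentile([r["ttft_ms"] for r in requests], 75),
        "avg_tokens_per_request": total_tokens / len(requests),
    }


summary = summarize_requests(REQUESTS)
print(summary)
```

Nothing in these records says whether the agent's task succeeded, which is exactly the gap described above.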

Resend – AX as agent onboarding

Resend defines Agent Experience as agent readiness, with a focus on onboarding. Their approach centers on enabling agents to start using a product immediately, without human intervention.

Resend operationalizes AX through what they call AI onboarding:

- MCP server access

- CLI tools

- documentation designed for agents

- MCP-based documentation servers

- reusable capabilities such as email skills

This setup reflects a core assumption. Agents do not contact sales or book demos. They need to execute tasks immediately.

Resend defines AX through a set of principles focused on reducing ambiguity and enabling reliable execution:

- APIs must be unambiguous and machine-parsable, with no hidden behavior, implicit defaults, or inconsistent schemas

- Endpoints must be self-discoverable, with parameters and constraints defined through structured schemas, OpenAPI specifications, and explicit examples

- Examples must be complete and directly executable, since agents reuse them without modification

- Workflows must minimize multi-step dependencies, with single-call operations preferred over chained interactions

Resend also introduces reusable capabilities through skills. These skills encapsulate best practices, including error handling and retry logic, to improve reliability.
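The kind of retry logic a skill might encapsulate can be sketched as a small wrapper with exponential backoff. This is a generic pattern under stated assumptions, not Resend's implementation: `TransientError`, `call_with_retry`, and `flaky_send` are hypothetical names, and a real skill would decide which failures are retryable based on the API's actual error responses.

```python
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying, such as rate limits."""


def call_with_retry(operation, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call operation(), retrying transient failures with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # Out of attempts: surface the error to the caller.
            # Delay doubles after each failed attempt: 0.5s, 1s, 2s, ...
            sleep(base_delay * 2 ** (attempt - 1))


# Demo: a stand-in operation that fails twice, then succeeds.
attempts = {"count": 0}


def flaky_send():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("rate limited")
    return "sent"


delays = []  # Record delays instead of sleeping so the demo runs instantly.
result = call_with_retry(flaky_send, sleep=delays.append)
print(result, delays)
```

Packaging this behavior once means the agent does not have to rediscover it through trial and error on every integration.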

Resend does not define a formal AX measurement framework. Their approach implies qualitative and execution-based signals:

- ability of an agent to discover and use the API

- success in generating working code

- ability to handle errors and retry operations

- level of human intervention required

Resend focuses on onboarding and execution readiness. Their approach improves initial success, but does not fully address long-term agent performance across complex workflows.

Mintlify – AX as documentation for agents

Mintlify positions Agent Experience at the documentation layer. Their approach focuses on making APIs understandable and usable by agents through structured, machine-consumable content. Where Resend designs the API for agents, Mintlify designs the interface that explains it.

Mintlify operationalizes AX through documentation systems built for retrieval and parsing:

- structured documentation layouts

- machine-ingestible formats with predictable patterns

- content optimized for search and retrieval

- direct access to agent-friendly formats such as Markdown

This approach assumes that agents succeed when they can efficiently retrieve and interpret information.

Mintlify's AX rules are not explicitly defined, but their tooling and blog content reveal consistent principles:

- Documentation must be structured rather than narrative, since agents rely on patterns and consistent layouts

- Content must be machine-ingestible, with minimal noise and predictable formatting to reduce parsing complexity

- Documentation must be optimized for retrieval, with content organized in isolated chunks that can answer specific queries

- Naming and structure must remain consistent across pages, so agents can generalize patterns
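The "isolated chunks" principle can be illustrated with a short sketch that splits a Markdown page into one chunk per second-level heading, so each chunk can answer a specific query on its own. The sample page, its endpoints, and the `chunk_by_heading` helper are hypothetical. The sketch is deliberately simplified: it skips content before the first heading and does not treat fenced code blocks specially.

```python
# Hypothetical documentation page; the endpoints are placeholders.
SAMPLE_PAGE = """\
# Emails API

Intro text.

## Send an email

POST /emails with a JSON body.

## List emails

GET /emails returns recent messages.
"""


def chunk_by_heading(markdown, marker="## "):
    """Split a Markdown page into chunks, one per heading that starts with marker."""
    chunks = []
    title, body = None, []
    for line in markdown.splitlines():
        if line.startswith(marker):
            if title is not None:
                chunks.append({"title": title, "text": "\n".join(body).strip()})
            title, body = line[len(marker):].strip(), []
        elif title is not None:
            body.append(line)
    if title is not None:
        chunks.append({"title": title, "text": "\n".join(body).strip()})
    return chunks


chunks = chunk_by_heading(SAMPLE_PAGE)
for chunk in chunks:
    print(chunk["title"], "->", chunk["text"])
```

Consistent heading levels and naming are what make this kind of mechanical split possible across every page of a documentation site.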

Mintlify does not define a formal AX measurement framework. Their blog content highlights operational signals they track:

- agent traffic share, used to assess how much documentation is consumed by agents

- task completion behavior, based on whether agents find the required information and exit successfully

- token cost of documentation, measured by how expensive it is for an agent to process a page

- discoverability signals, such as how often structured entry points like llms.txt are accessed

This approach improves how agents understand systems. It does not guarantee that agents can successfully execute tasks once they leave the documentation layer.

Netlify – AX as automation and access

Netlify defines Agent Experience as the holistic experience AI agents have as users of a product. Their approach focuses on how easily automated systems interact with a platform without friction.

They operationalize AX through three dimensions:

- access, whether agents can use the product directly

- context, whether agents understand the system

- tools, whether agents can act effectively through it

This framework positions AX as a combination of accessibility, understanding, and execution.

In practice, this translates into systems designed for automation:

- API-first interfaces that allow programmatic control

- composable architectures that support orchestration

- workflows with minimal operational friction

Netlify's AX rules reflect this focus on automation and accessibility:

- Agents must be able to operate the product directly, without human gating such as manual onboarding steps

- Systems must provide explicit, machine-consumable context through structured documentation and descriptions

- Interfaces must be adapted for agents, with streamlined content instead of human-oriented layouts

- Systems must support content negotiation, where agents receive structured data and humans receive UI
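Content negotiation can be sketched as a function that inspects the HTTP `Accept` header and picks a representation. This is a simplified illustration, not Netlify's implementation: the supported types, the `choose_representation` helper, and the fallback to HTML are assumptions, and wildcard handling from the HTTP specification is reduced to "fall back to the default".

```python
# Representations this hypothetical server can produce.
SUPPORTED = ("text/html", "text/markdown")


def parse_accept(header):
    """Return (media_type, q) pairs from an Accept header, highest q first."""
    items = []
    for part in header.split(","):
        fields = [field.strip() for field in part.split(";")]
        media_type = fields[0].lower()
        if not media_type:
            continue
        q = 1.0  # A missing q parameter means full preference.
        for field in fields[1:]:
            if field.startswith("q="):
                try:
                    q = float(field[2:])
                except ValueError:
                    q = 0.0
        items.append((media_type, q))
    # sorted() is stable, so ties keep the order the client sent.
    return sorted(items, key=lambda item: item[1], reverse=True)


def choose_representation(accept_header, default="text/html"):
    """Pick the client's most preferred supported type, or the default."""
    for media_type, q in parse_accept(accept_header or ""):
        if q > 0 and media_type in SUPPORTED:
            return media_type
    return default


# An agent asking for Markdown first, and a typical browser request.
print(choose_representation("text/markdown, text/html;q=0.8"))
print(choose_representation("text/html,application/xhtml+xml,*/*;q=0.8"))
```

With this in place, the same URL can serve a lightweight Markdown document to an agent and the full UI to a person.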

Netlify evaluates AX through operational signals tied to automation:

- percentage of workflows initiated by agents

- number of steps required to go from prompt to deployment

- level of agent adoption across the platform

For example, Netlify reports that a significant number of sites are created through agent-driven workflows such as ChatGPT integrations.

This approach improves how easily agents can access and operate a system. It does not measure whether agents successfully complete tasks or recover from failure during execution.

Why Current AX Metrics Fail

Most approaches to Agent Experience rely on quantitative signals such as API call volume, endpoint usage, documentation readability, and tool discoverability. These metrics measure adoption. They do not measure whether agents succeed.

In our internal testing with Claude and GPT, agents often failed during integration even when these metrics looked strong.

An agent can call the correct endpoint, read the documentation, and select the right tool, and still fail to complete the task.

This is the limitation of DX-style measurement. It shows whether a system is used, not whether it works for agents.

As a result, most AX evaluations miss what matters:

- quality of generated code

- correctness of integrations

- ability to recover from errors

A product can appear agent-friendly and still fail in practice.
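The gap between usage and success can be made explicit by recording outcomes per agent run. The sketch below is a hypothetical scoring helper, with invented field names and made-up sample runs that are not results from our testing. It reports an adoption-style signal next to two execution signals.

```python
from dataclasses import dataclass


@dataclass
class AgentRun:
    """One hypothetical agent attempt at an integration task."""
    called_correct_endpoint: bool
    integration_passed_tests: bool
    errors_encountered: int
    errors_recovered: int


def summarize(runs):
    """Compare a usage signal with task success and error recovery."""
    total = len(runs)
    errors = sum(run.errors_encountered for run in runs)
    recovered = sum(run.errors_recovered for run in runs)
    return {
        "endpoint_usage_rate": sum(run.called_correct_endpoint for run in runs) / total,
        "task_success_rate": sum(run.integration_passed_tests for run in runs) / total,
        "error_recovery_rate": recovered / errors if errors else None,
    }


# Made-up sample: every run uses the right endpoint, but only half succeed.
SAMPLE_RUNS = [
    AgentRun(True, True, 0, 0),
    AgentRun(True, True, 2, 2),
    AgentRun(True, False, 1, 0),
    AgentRun(True, False, 1, 0),
]

scores = summarize(SAMPLE_RUNS)
print(scores)
```

In this invented sample the usage signal is perfect while half of the tasks fail, which is the pattern a DX-style dashboard would hide.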

When agents succeed consistently:

- products are recommended more often

- integrations complete faster

- workflows become more reliable

Execution determines whether agents continue using a product or abandon it.

A Framework for Agent Experience

Some level of human intervention remains necessary, especially for security steps such as API key management. In practice, this represents a small portion of the workflow.

Agent Experience should be defined as how easily and effectively an agent can discover, understand, integrate, and use a product with minimal human intervention.

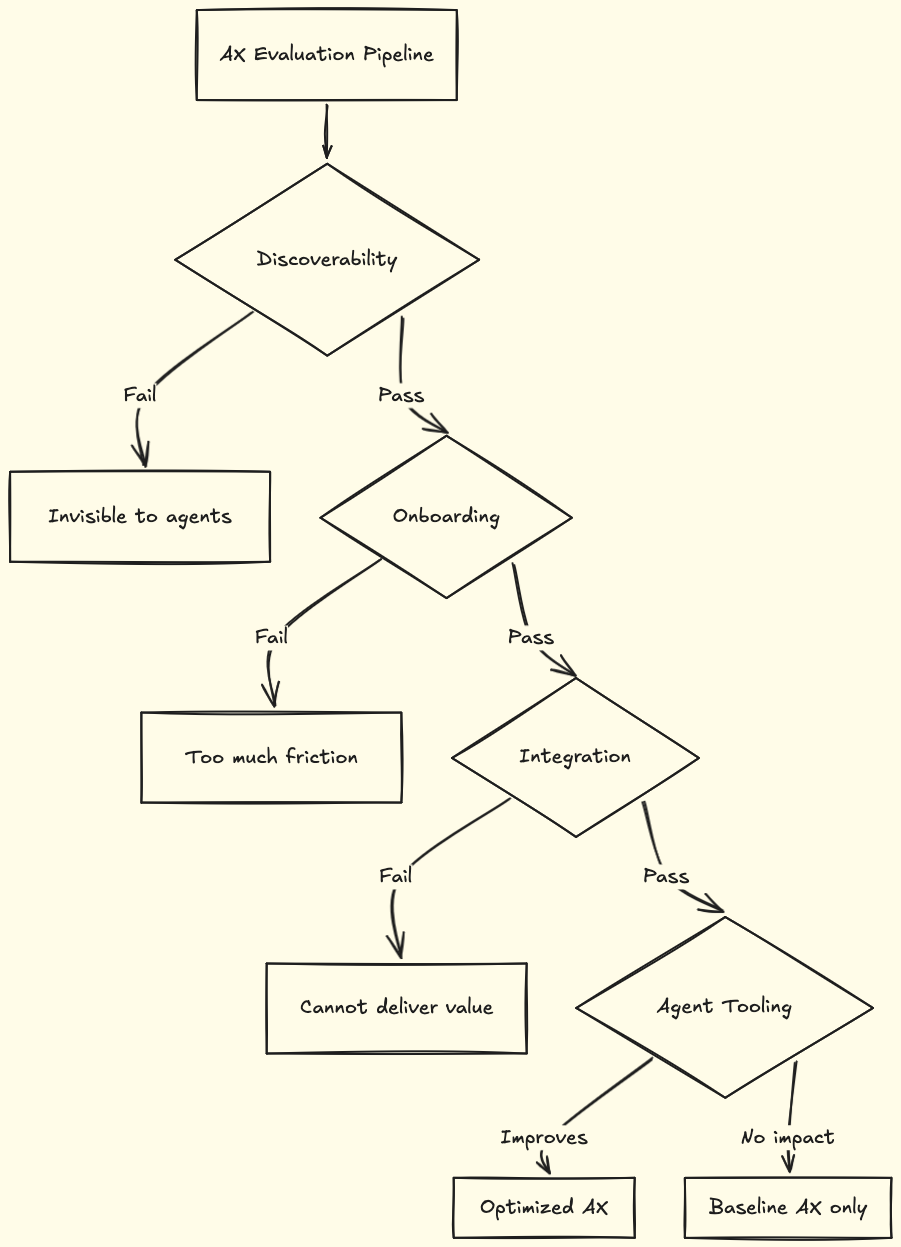

From this definition, AX should be evaluated across four stages, in order:

-

Discoverability determines whether an agent can find and select a product. The agent must know the product exists, recognize its relevance, and choose it over alternatives, with or without web search. If this step fails, the rest does not matter.

-

Onboarding measures how easily an agent can get started. The agent must be able to sign up, obtain credentials, and access a usable environment with minimal human input. In most cases, a single action such as providing an API key should be enough.

-

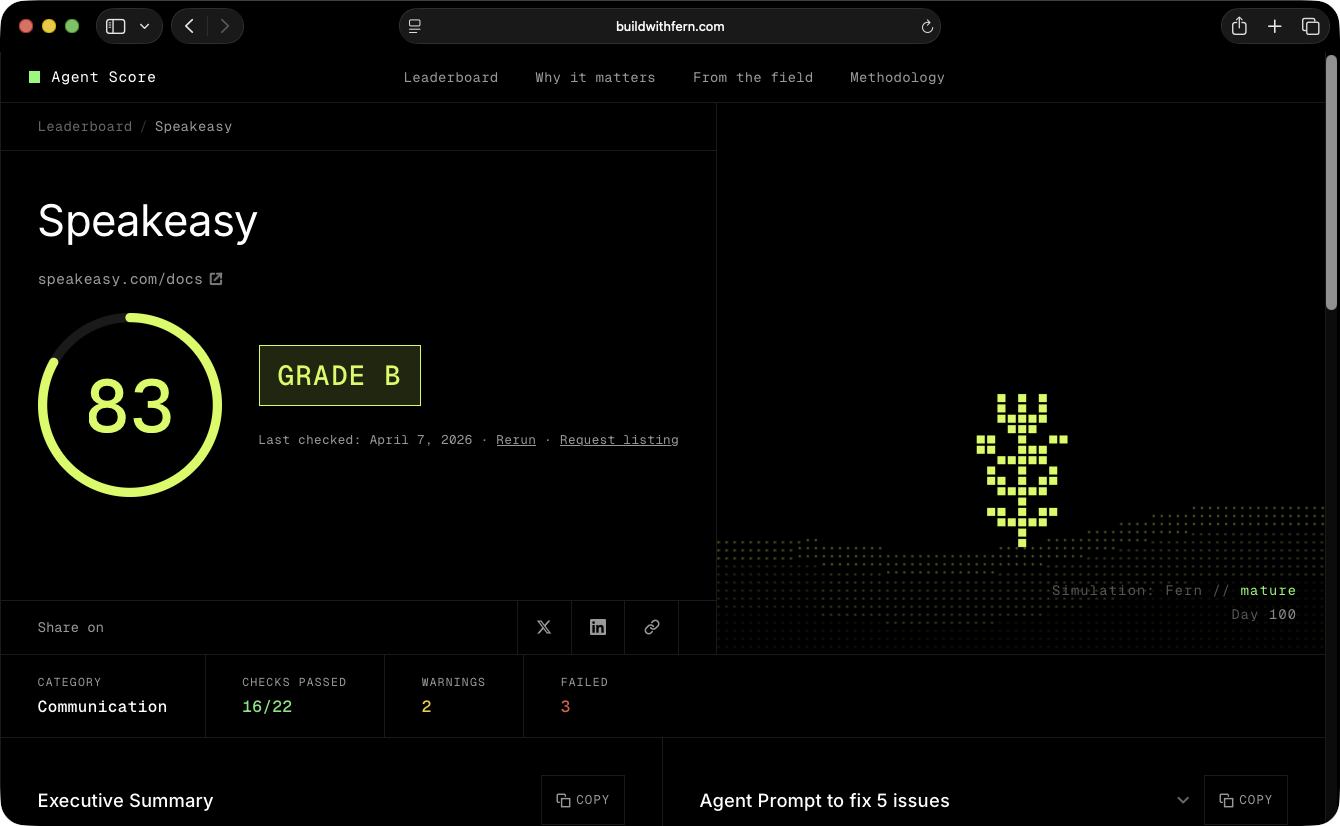

Integration evaluates whether the agent can build something functional without guidance. The agent must understand APIs, compose workflows, and handle errors. This step should be tested without agent-specific tooling such as MCP servers or skills. In our benchmarks, agents succeed more often when documentation is easily indexable and retrievable. Tools such as the Fern Agent Score provide a way to evaluate how well documentation supports this.

- Agent tooling measures how much agent-native interfaces improve performance. This includes MCP servers, structured documentation, and reusable capabilities. These tools should reduce integration time, improve code quality, and lower token usage compared to integrations without them.
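One way to express the agent tooling stage is as a relative change against a baseline run without tooling. The sketch below assumes both runs record the same three metrics. The metric names and numbers are hypothetical placeholders, not benchmark results.

```python
def relative_change(baseline, with_tooling):
    """Fractional change from baseline; negative means the value went down."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (with_tooling - baseline) / baseline


# Hypothetical measurements for the same task, with and without agent tooling.
BASELINE = {"integration_minutes": 40, "tokens_used": 120_000, "tests_passed": 6}
WITH_TOOLING = {"integration_minutes": 30, "tokens_used": 90_000, "tests_passed": 9}

comparison = {
    metric: relative_change(BASELINE[metric], WITH_TOOLING[metric])
    for metric in BASELINE
}

for metric, change in comparison.items():
    print(f"{metric}: {change:+.0%}")
```

Tooling earns its place when time and token usage move down while the quality measure moves up. If the numbers do not move, the tooling is not contributing to Agent Experience.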

We have documented this process in detail in our AX audit. Additional audits across products such as Supabase, PlanetScale, and DocuSign are available here.

Final thoughts

Most current approaches define AX in terms of accessibility. They focus on documentation, APIs, and tooling that make systems easier to use. This improves adoption, but it does not guarantee that agents succeed.

Agent Experience should be defined as how effectively an agent can discover, integrate, and execute tasks with a product over time, with minimal human intervention.

This shift matters because agents do not behave like developers. They do not adapt to unclear systems or compensate for poor design the way human developers do. When they fail, they retry, waste tokens, or abandon the task.

This is why AX must be evaluated as a sequence.

- Discoverability determines whether a product is considered

- Onboarding determines whether an agent can start

- Integration determines whether value can be delivered

- Agent tooling determines whether performance improves
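Evaluating AX as a sequence can be sketched as a gated check that stops at the first failing stage, since later stages have no meaning if an earlier one fails. The stage names come from the framework above. The `evaluate_in_sequence` helper and the stand-in checks are hypothetical; in a real audit each check would run an actual agent task.

```python
STAGES = ("discoverability", "onboarding", "integration", "agent_tooling")


def evaluate_in_sequence(checks):
    """Run one check per stage, in order, and stop at the first failure."""
    results = {}
    for stage in STAGES:
        passed = bool(checks[stage]())
        results[stage] = passed
        if not passed:
            break  # Later stages are not evaluated once a stage fails.
    return results


# Stand-in checks: the product is found, but the agent cannot get credentials.
checks = {
    "discoverability": lambda: True,
    "onboarding": lambda: False,
    "integration": lambda: True,
    "agent_tooling": lambda: True,
}

report = evaluate_in_sequence(checks)
print(report)
```

In this example the report stops at onboarding, so integration and agent tooling never receive a score, no matter how strong they would have been in isolation.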