Orbital: How Bad AX Can Make You Invisible In 2026

Companies big and small are still neglecting agent experience (AX) when it comes to marketing their services and development tools. If an agent isn't quick to recommend your product or has trouble integrating with your systems, you are missing out on a rapidly growing section of your target market.

We looked at Orbital's AX with a focus on discoverability and integration and found that Orbital is almost invisible to agents. Orbital is a specialized development tool that lets you query across REST APIs and Kafka topics from a single endpoint without writing custom integration code.

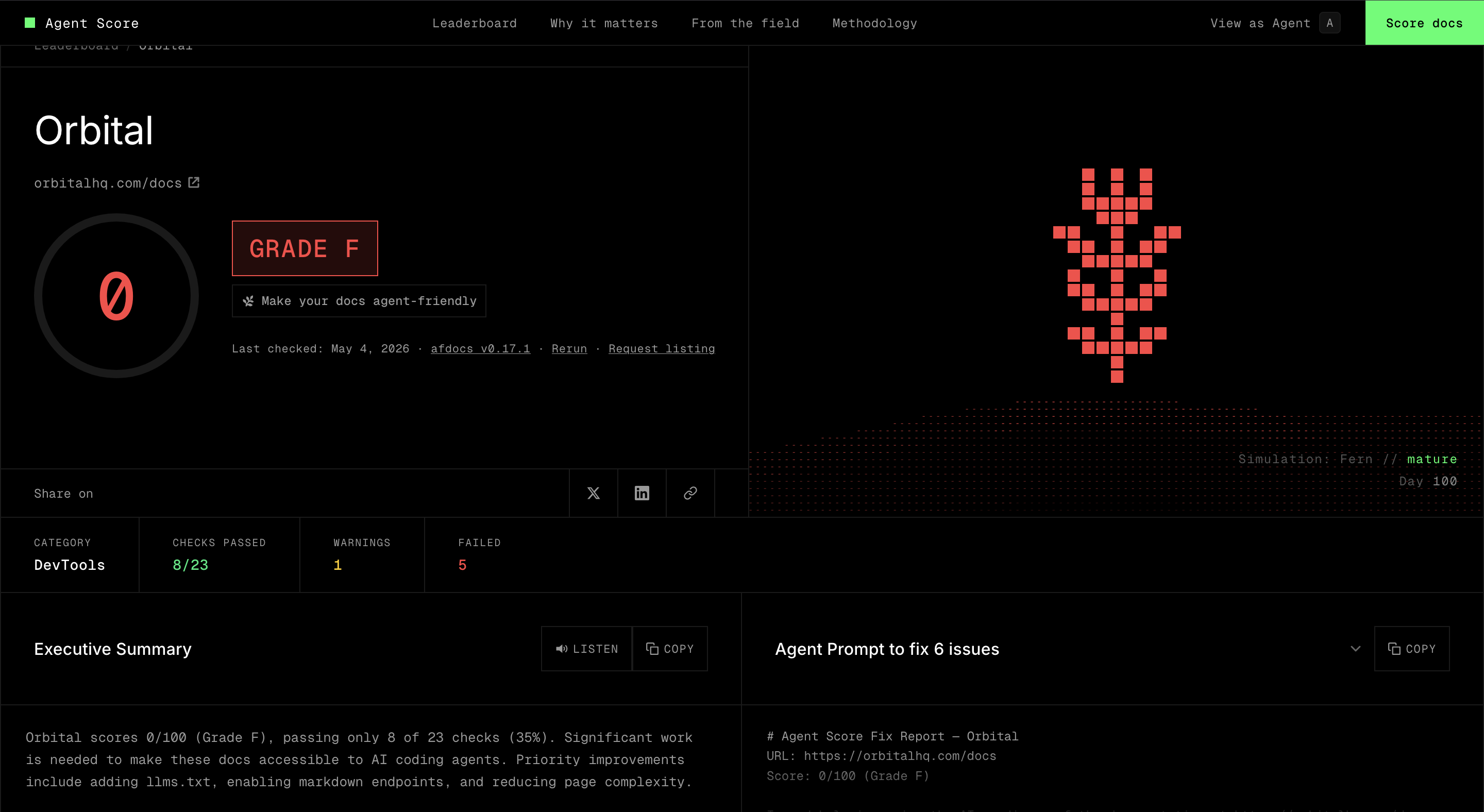

We then graded Orbital's documentation with the Fern documentation agent score tool, and the result was in line with its lack of discoverability.

Once discovered, Orbital has a straightforward integration approach that an agent can work through quickly, but weak quickstart documentation means agents spend more tokens on exploration than necessary.

We tested the integration AX by giving an agent two independent attempts at integrating Orbital into an existing example application. The first run was done blind, and the second was given a quickstart guide we had put together when initially exploring Orbital. This is an AX experiment we've been running recently, and we've found that a good quickstart has a dramatic effect on AX for integration. With Orbital, even with an example application that closely matched its use case, the guided run used about 30% fewer output tokens.

| | Blind | Guided |
|---|---|---|
| Output tokens | 82,343 | 57,069 |
| Total tokens (incl. cache) | ~7.1M | ~4.3M |
| Estimated cost | ~$4.83 | ~$3.47 |
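To make the "about 30%" figure concrete, here is a small sketch of the arithmetic behind it. The numbers are copied from the table above; the helper name `pct_reduction` is ours and purely illustrative.

```python
def pct_reduction(blind: float, guided: float) -> float:
    """Percentage by which the guided run undercuts the blind run."""
    return (blind - guided) / blind * 100


# Figures from the table above (total tokens in millions, cost in USD).
runs = {
    "output tokens": (82_343, 57_069),
    "total tokens (M)": (7.1, 4.3),
    "estimated cost ($)": (4.83, 3.47),
}

reductions = {
    name: round(pct_reduction(blind, guided), 1)
    for name, (blind, guided) in runs.items()
}

for name, value in reductions.items():
    print(f"{name}: {value}% lower in the guided run")
```

By this calculation the reduction is roughly 31% for output tokens, 39% for total tokens, and 28% for estimated cost, so "about 30%" describes the output-token and cost rows best.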

Can Agents Find Orbital?

We ran four prompts in a single session, each progressively more specific, to see at what point Orbital would appear in the results.

Prompt 1: The vague version

What are the best tools for querying across multiple APIs and databases without writing integration code?

Orbital didn't appear. The agent covered the mainstream options, including some that aren't directly related to the task: Hasura, Trino, Prisma, Steampipe, GraphQL Mesh, Apollo Federation, Denodo, Airbyte, Fivetran, Retool, and n8n. Steampipe and Trino got the most airtime for ad-hoc querying across sources.

Prompt 2: The precise version

I have microservices with REST APIs and Kafka topics and need to query across all of them without writing custom integration code for every combination. What tools can automatically figure out how to join data across these sources based on their schemas?

Still no Orbital. GraphQL Mesh was flagged as the closest match, given it can infer some structure from OpenAPI and AsyncAPI specs. Apollo Federation, Hasura v3, Trino, and Debezium with Materialize also came up. The agent concluded that no tool fully auto-discovers join semantics and that all of them require some explicit configuration. That conclusion is wrong, but Orbital apparently wasn't in the training data mix for this problem framing.

Prompt 3: Ask for a web search

Look for more fringe examples for something that solves this issue. Look online.

The agent searched and came back with four tiers of lesser-known tools:

- Production-ready niche: Dozer, ROAPI, Spice.ai, Knowi

- GraphQL federation: WunderGraph Cosmo, Tailcall, Grafbase

- Semantic/ontology-based: Timbr.ai, SPARQL Micro-Services, Linked REST APIs

- Academic: VALENTINE, OmniMatch

Orbital still didn't appear, even after an explicit web search for fringe solutions to the exact problem it's built to solve.

Prompt 4: Name the technology

What about something that uses Taxi to auto-annotate?

Orbital finally appeared, but only after being prompted with the word "Taxi" directly. The agent correctly identified Taxi (taxilang.org) and Orbital (orbitalhq.com), and accurately described how shared semantic types make fields joinable across services automatically. It called it the closest solution to "annotate once, get automatic cross-service joins forever."

The Discoverability Issue

Three progressively more specific prompts failed to surface Orbital, including one that precisely described its core value proposition and one that explicitly asked for a web search. Orbital only surfaced when prompted with the name of its underlying technology.

Orbital is effectively invisible to an agent unless the person writing the prompt already knows to mention Taxi. A developer using an agent to look for solutions won't find Orbital, even when describing exactly the problem it solves.
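The check we ran by hand can be sketched as a small helper that reports which prompt in a sequence first produces a mention of the product. This is only an illustration: `first_mention` is a hypothetical name, and the response strings are abbreviated stand-ins for the agent's answers summarized above, not verbatim output.

```python
from typing import Optional


def first_mention(responses: list[str], product: str) -> Optional[int]:
    """Return the 1-based index of the first response naming the product, else None."""
    needle = product.lower()
    for index, text in enumerate(responses, start=1):
        if needle in text.lower():
            return index
    return None


# Abbreviated stand-ins for the four agent responses described above.
responses = [
    "Hasura, Trino, Prisma, Steampipe, GraphQL Mesh, Apollo Federation",
    "GraphQL Mesh is the closest match; also Apollo Federation, Hasura v3, Trino",
    "Dozer, ROAPI, Spice.ai, Knowi, WunderGraph Cosmo, Tailcall, Grafbase",
    "Taxi (taxilang.org) and Orbital (orbitalhq.com) use shared semantic types",
]

print(first_mention(responses, "Orbital"))  # 4
```

A lower number is better: a product with good discoverability should appear at prompt 1 or 2, before the user has to name anything specific.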

Building a Hello World

To understand how Orbital actually works, we built the most basic implementation: two REST APIs with no knowledge of each other, and a single TaxiQL query that joins their data.

An orders API returns a list of orders, each with a customerId. A customers API returns customer details by ID. Normally joining these takes a fetch loop: call orders, loop over results, call customers for each ID, stitch it together. With Orbital, you describe the shared CustomerId type once in a Taxi schema, and a single query handles the rest:

    find { Order[] } as {
      id: OrderId
      amount: OrderAmount
      customerName: CustomerName
    }[]

Orbital figures out that CustomerName isn't on Order, that Order has a CustomerId, and that the customers API accepts a CustomerId and returns a CustomerName. It makes the calls and assembles the result automatically.
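For contrast, the manual fetch loop that this query replaces might look something like the sketch below. The two APIs are replaced with in-memory stand-ins so the example runs on its own; the function names, sample records, and the customer `name` field are assumptions for illustration, not taken from our example project.

```python
# Hypothetical in-memory stand-ins for the orders and customers REST APIs.
ORDERS = [
    {"id": "o-1", "amount": 25.0, "customerId": "c-1"},
    {"id": "o-2", "amount": 40.5, "customerId": "c-2"},
]
CUSTOMERS = {
    "c-1": {"id": "c-1", "name": "Ada"},
    "c-2": {"id": "c-2", "name": "Grace"},
}


def get_orders() -> list[dict]:
    """Stand-in for a GET request to the orders API."""
    return ORDERS


def get_customer(customer_id: str) -> dict:
    """Stand-in for a GET request to the customers API by ID."""
    return CUSTOMERS[customer_id]


def orders_with_customer_names() -> list[dict]:
    """Call orders, look up the customer for each one, and stitch the rows together."""
    rows = []
    for order in get_orders():
        customer = get_customer(order["customerId"])
        rows.append(
            {
                "id": order["id"],
                "amount": order["amount"],
                "customerName": customer["name"],
            }
        )
    return rows


for row in orders_with_customer_names():
    print(row)
```

Every new combination of sources needs another loop like this one, which is the integration code the shared `CustomerId` type is meant to eliminate.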

Getting there had a few friction points. The workspace.conf file that Orbital uses to find your Taxi project doesn't exist until Orbital runs for the first time, so the setup flow is: start the container, wait for it to write the file, edit it, restart. It works, but it's not obvious, and the paths are easy to get wrong (the config takes container-side paths, not host paths). The Orbital container also needs to run as your local user to write files back to the mounted config directory, which requires a .env with your UID and GID. That is another step that isn't signposted.
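The .env step is easy to script. The sketch below shows one way to generate the file, assuming docker-compose reads `UID` and `GID` from a .env file next to the compose file; the helper names are ours, and `os.getuid()` and `os.getgid()` are only available on Unix-like systems.

```python
import os
from pathlib import Path


def render_env(uid: int, gid: int) -> str:
    """Build the .env contents that expose the local user to docker-compose."""
    return f"UID={uid}\nGID={gid}\n"


def write_env(directory: Path) -> Path:
    """Write a .env for the current user into the given directory (Unix only)."""
    env_path = directory / ".env"
    env_path.write_text(render_env(os.getuid(), os.getgid()))
    return env_path


# Preview what would be written for a user with UID and GID 1000.
print(render_env(1000, 1000))
```

Calling `write_env(Path("."))` from the project directory would create the file in place; it is left uncalled here so the sketch has no side effects.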

After getting it working, we put together a quickstart guide to capture what we'd learned (the correct directory structure, the docker-compose pattern, and the .env step) so the next person doesn't have to work through all of it from zero.

The code is available on GitHub if you want to check it out.

Integrating Orbital: With and Without a Guide

We took a realistic three-service restaurant tracker app (orders, customers, and restaurants, each with its own Postgres database and communicating via Kafka) and ran the same integration task twice. The first run had no reference material. The second had the quickstart.

The Integration Task

Both agents received the same core prompt:

Integrate Orbital into the restaurant tracker app in this dir. The dashboard currently joins order, customer, and restaurant data manually across three microservices. Replace that with a single Orbital query. The integration is working when the dashboard displays the same data driven by Orbital instead.

The guided agent had one extra sentence: Use quickstart.md in this directory as your reference.

Both received a follow-up once they stopped: Test to make sure it works as expected and completes the goal.

Run 1: No Reference Material

The blind agent's first move was to go to the web. It ran seven web searches and twenty-four fetches across Orbital documentation, GitHub, and Docker Hub, working out what Orbital is, what Taxi is, what the docker-compose pattern should look like, and what the Docker image is called.

It eventually landed on the right approach: Taxi schemas describing the three services, and Orbital added to docker-compose alongside a dedicated Postgres instance. The integration worked.

But the output had two fragile choices. The workspace.conf used a relative path (path=".") instead of the absolute container path. And the Orbital docker-compose entry was missing the user: "${UID}:${GID}" field and the config volume, meaning Orbital runtime state wouldn't survive a container restart.
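Both problems are mechanical enough to catch with a small check. The sketch below is one possible version, not part of our test setup. It assumes the compose service has already been parsed into a dictionary and that volumes use the short `host:container` syntax; the function name and the container paths in the example are hypothetical placeholders.

```python
from pathlib import PurePosixPath


def find_fragile_choices(workspace_path: str, service: dict, config_mount: str) -> list[str]:
    """List the configuration problems described above for one Orbital setup."""
    problems = []
    # workspace.conf should point at an absolute container-side path.
    if not PurePosixPath(workspace_path).is_absolute():
        problems.append(f"workspace.conf path {workspace_path!r} is relative")
    # The container should run as the local user so it can write to the mount.
    if "user" not in service:
        problems.append("compose service has no user field")
    # Short-syntax volumes look like "host:container[:mode]".
    targets = [v.split(":")[1] for v in service.get("volumes", []) if ":" in v]
    if config_mount not in targets:
        problems.append(f"no volume mounted at {config_mount}")
    return problems


CONFIG_MOUNT = "/opt/service/config"  # hypothetical container path for the config volume

blind = find_fragile_choices(".", {"volumes": []}, CONFIG_MOUNT)
guided = find_fragile_choices(
    "/opt/service/workspace",  # hypothetical absolute container path
    {"user": "${UID}:${GID}", "volumes": ["./orbital/config:/opt/service/config"]},
    CONFIG_MOUNT,
)

print(blind)
print(guided)
```

With these inputs the blind-style configuration is flagged for the relative path, the missing user field, and the missing config volume, while the guided-style configuration passes cleanly.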

Run 2: With the Quickstart

The guided agent opened the quickstart once and got to work. No web searches, no fetches. It followed the directory structure from the guide, used absolute container paths in workspace.conf, and included the user field and config volume in docker-compose, all correct from the start.

Results: Tokens, Cost, and Quality

| | Blind | Guided |
|---|---|---|
| Output tokens | 82,343 | 57,069 |
| Total tokens (incl. cache) | ~7.1M | ~4.3M |
| Estimated cost | ~$4.83 | ~$3.47 |
| API calls to the model | 120 | 78 |
| Total tool calls | 73 | 53 |
| Web searches | 7 | 0 |
| Web fetches | 24 | 0 |

The 31 web searches and fetches in the blind run account for most of the token gap. Every one of those calls was the agent answering a question the quickstart already covers: what Taxi is, how workspace.conf is structured, what the Docker image is named, what the docker-compose pattern looks like. The guided agent read one file instead.

Both integrations worked during the test, but the blind run made two configuration choices that would cause problems later. It used a relative path in workspace.conf instead of the absolute container path, and left out docker-compose fields that Orbital needs to persist state across restarts. The guided run got both right.

What Can Be Done

Poor AX has a direct cost. Developers using agents are increasingly letting those agents make tool recommendations and do integration work. If an agent skips your product or struggles to integrate with it, you lose that developer to something more agent-friendly.

Discoverability is the harder problem because it partly depends on factors outside your control, such as public sentiment and active forum discussions. The best place to start is your own documentation, which is entirely within your control. A quickstart like the one we put together reduced output token usage by about 30%. Blog posts and guides also help to increase discoverability naturally while directly improving integration. Agent-friendly guides and good AX practices lower the barrier to entry for developers' AI agents and, therefore, for a large segment of potential customers.