Best Text-To-Speech Model in 2026: Blind Benchmark

In 2026, the text-to-speech (TTS) market is saturated. Every provider is offering a new groundbreaking model trained on a zillion hours of natural human speech in 200 different languages.

Many run marketing pages with blind tests pitting their own model against a competitor's flagship and asking which you prefer. Theirs usually sounds great. The competitor sounds like HAL 9000.

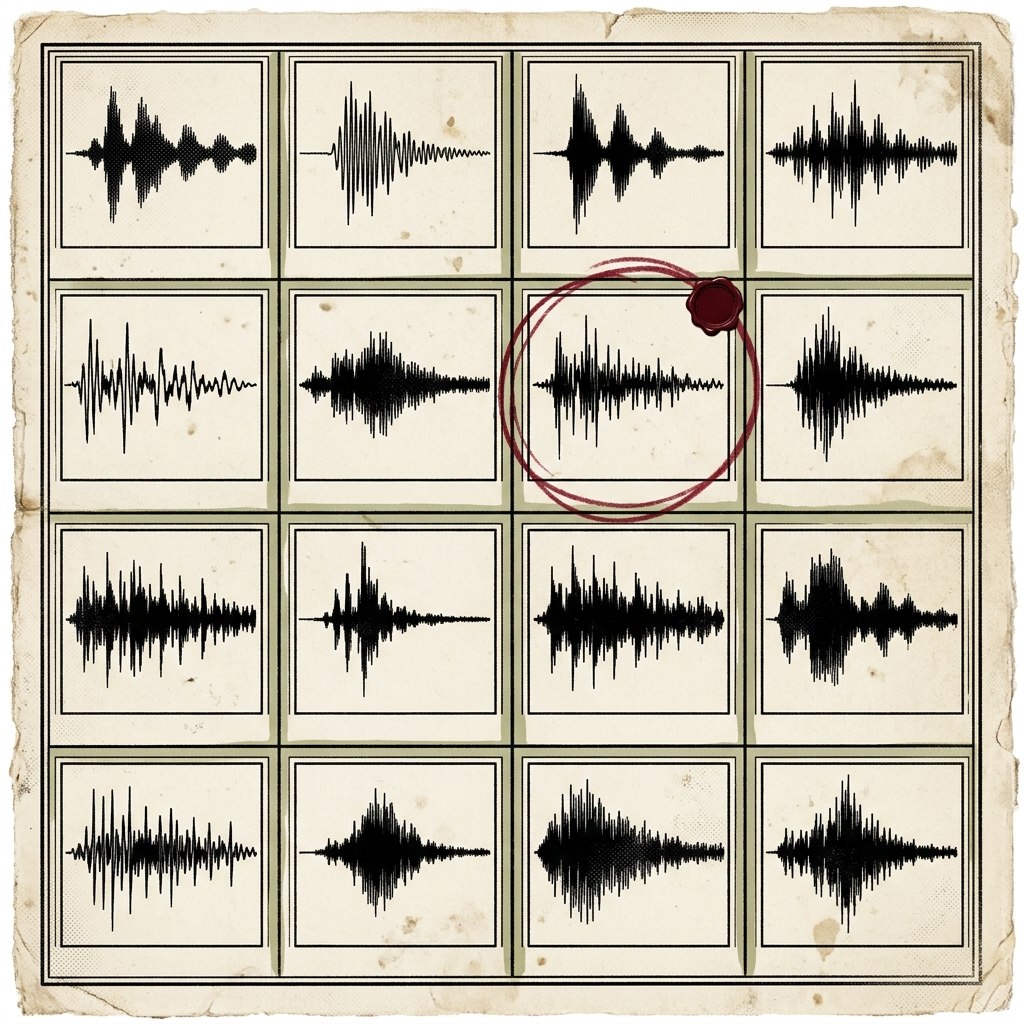

To provide an unbiased platform for a real comparison, we tested 16 different models in a blind head-to-head: ElevenLabs, OpenAI, Azure, AWS Polly, Gemini, xAI, Deepgram, Hume, Voxtral, Rime, Cartesia, Groq Orpheus, LMNT, Inworld, Smallest, and UnrealSpeech.

Emotive scenarios illustrate the difference in model quality more clearly than neutral ones. To test general quality, we put together three scripts: two dialogs using two distinct voices each, and one audiobook narration with a single voice.

Across all three tests, xAI and Gemini were a considerable step up from the competition. xAI came out on top again when we tested code-switching with a foreign accent.

In our final test, we explored expressive annotations: inline tags some providers support for more fine-grained control over how the models deliver emotion. Groq Orpheus took a surprise win here, with xAI and Gemini close behind.

In the rest of this piece, you'll find the audio clips each provider generated along with a description of the scenario. You can take the blind tests yourself to see if your results match ours. The full code we used to generate these clips is on GitHub, so you're welcome to use it to check our fairness or create your own scenarios with your own API keys.

This article focuses on basic TTS models. If you're more interested in realtime voice agents, like the kind you'd use for a virtual assistant or answering service, check out our article on the best voice agent in 2026.

Dialog and Narration Quality

We tested three scripts covering the main registers where differences in TTS quality show up most clearly.

Pride & Prejudice: Six lines from Darcy's proposal attempt. We tried to find voices with British accents where possible to match the subject matter. Some providers' APIs return enough voice metadata to make finding a specific accent easy; others don't.

The News: An AI-generated newscast designed to give the models a chance to flex their emotional range and interpretation of the subject matter. Casual speech is the hardest register to fake. Most providers either sound too polished or too robotic here.

1984: Three sentences from the opening of Nineteen Eighty-Four. Spare, foreboding, deliberately heavy. This also tests a common use case: audiobook narration.

xAI and Gemini emerged as the clear top two across all three scenarios. xAI took Pride & Prejudice and The News outright, while Gemini won the 1984 narration. Gemini tends to sound a bit overacted, like a theatre kid trying too hard to express themselves. xAI generally strikes a better balance: you get a sense of the emotion, but it's more pleasing to listen to.

This is just our opinion, and different voices might sound better to different ears. Check out the sample clips in the result bracket above, or take the blind test yourself below to see if you agree.
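For readers who want to run their own comparison, the blind head-to-head format can be sketched as a single-elimination bracket in which the judge only ever hears two unlabeled clips. This is a minimal illustration, not the code from our GitHub repository: the function name `run_blind_bracket`, the `clips` mapping, and the `judge` callback are all hypothetical.

```python
import random


def run_blind_bracket(clips, judge, seed=None):
    """Run a single-elimination bracket and return (winner, rounds).

    clips: dict mapping provider name -> clip (a file path, bytes, anything).
    judge: callable(clip_a, clip_b) -> "A" or "B". It never sees provider names.
    """
    field = list(clips)
    # A clean bracket needs 1, 2, 4, 8, 16, ... entrants.
    if not field or len(field) & (len(field) - 1):
        raise ValueError("bracket size must be a power of two")

    rng = random.Random(seed)
    rng.shuffle(field)  # random seeding of the bracket

    rounds = []
    while len(field) > 1:
        winners = []
        for i in range(0, len(field), 2):
            pair = [field[i], field[i + 1]]
            rng.shuffle(pair)  # randomize which provider is heard as "A"
            choice = judge(clips[pair[0]], clips[pair[1]])
            if choice not in ("A", "B"):
                raise ValueError("judge must return 'A' or 'B'")
            winners.append(pair[0] if choice == "A" else pair[1])
        rounds.append(winners)
        field = winners
    return field[0], rounds
```

Shuffling each pair before judging matters: if one provider were always played first, position bias could leak into the results.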

Code-Switching Between Languages

We also wanted to test how well the models handle language and accent variation. We wrote a script for a native French speaker who speaks in both English and French, code-switching mid-sentence without losing naturalness in either language. Some providers either lacked a French accent option or didn't support code-switching, so we reduced the bracket to the eight providers that did.

Notably, Gemini is missing from this bracket. It handles multilingual scripts well, detecting the languages automatically and switching between them seamlessly, but we couldn't find a way to give it a French accent, which this scene required.

xAI came out on top again, with some models struggling to produce anything coherent. Hume made a deep run with good handling of the accent and code-switching, but it lacked the natural emotive quality that xAI does so well.

Try the blind test for yourself below.

Expressive Annotation Tags

Most TTS providers aim for natural-sounding voices by trusting the model to interpret emotion from context. A few go further and offer annotation syntax: inline tags you embed directly in the text to trigger specific sounds or delivery modes on demand.

The providers vary in how they implement annotations. ElevenLabs, Gemini, and Groq Orpheus use a bracket style: drop [laughs] or [whispers] inline and the model reacts at that point. xAI is similar for point-in-time sounds, but it uses wrapping tags for sustained effects, so a whisper becomes <whisper>text like this</whisper>. Azure takes a different approach entirely, using SSML <mstts:express-as style="whispering"> wrappers around whole sentences rather than inline triggers. You set a style for a line, and you can't drop a laugh mid-sentence.
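To make the three styles concrete, here is a rough sketch that renders the same whispered line for each provider. It only uses the tag forms described above; the provider keys and the `render_whisper` helper are hypothetical, and the Azure output is only a fragment that would still need to sit inside a complete SSML document.

```python
from xml.sax.saxutils import escape

# Hypothetical provider keys for the bracket-style group described above.
BRACKET_STYLE = {"elevenlabs", "gemini", "groq-orpheus"}


def render_whisper(provider, text):
    """Return `text` marked up as a whisper in the given provider's style."""
    if provider in BRACKET_STYLE:
        # Inline trigger placed at the point where the effect should start.
        return f"[whispers] {text}"
    if provider == "xai":
        # Wrapping tag for a sustained effect.
        return f"<whisper>{text}</whisper>"
    if provider == "azure":
        # SSML is XML, so reserved characters in the text must be escaped.
        return (
            '<mstts:express-as style="whispering">'
            f"{escape(text)}"
            "</mstts:express-as>"
        )
    raise ValueError(f"no annotation style known for {provider!r}")


for name in ("elevenlabs", "xai", "azure"):
    print(name, "->", render_whisper(name, "Don't wake the baby."))
```

Keeping the markup behind one function like this lets the same script be reused across providers, which is roughly what a fair comparison needs.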

We tested seven annotations across six providers:

- laugh
- sigh
- gasp
- whisper
- a sentence delivered sad
- the same sentence delivered excited
- an attempt at singing

The narrator announces each one before demonstrating it, so you can hear exactly whether the tag fired.
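The announce-then-demonstrate structure is simple to reproduce. The sketch below assumes bracket-style tags and made-up narrator wording; `build_annotation_script` and the example lines are illustrative, not the script we used.

```python
def build_annotation_script(demos):
    """Build a test script from (label, tagged_line) pairs.

    Each annotation is announced in plain narration first, then demonstrated,
    so a listener can tell whether the tag actually fired.
    """
    lines = []
    for label, tagged_line in demos:
        lines.append(f"Next, {label}.")  # plain announcement, no tags
        lines.append(tagged_line)        # the line that carries the tag
    return "\n".join(lines)


script = build_annotation_script([
    ("a laugh", "[laughs] I can't believe that worked."),
    ("a whisper", "[whispers] Keep your voice down."),
])
print(script)
```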

xAI was finally unseated by an unlikely winner: Groq Orpheus. Groq handled all the tags exactly as you'd expect. xAI was probably the second best but got unlucky meeting Groq in the first round. Gemini made it to the final again but has a quirky interpretation of the annotations: it deletes the surrounding narration and just performs the actions. Refining the prompt to better suit Gemini's handling of annotations would probably improve this.

Try the blind test for yourself below.

Pricing per 1M Characters

We pulled the best-available published rate for each provider's flagship voice and normalized it to USD per 1M characters. Where volume tiers, annual commits, or enterprise rates are publicly documented, we used the cheapest option. Free tiers are excluded.
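The normalization itself is simple arithmetic. As a sketch, assuming each paid offer can be reduced to a price and the number of characters it covers (the helper names and the example figures below are hypothetical, not any provider's real pricing):

```python
def usd_per_million_chars(price_usd, chars_covered):
    """Convert a published price into USD per 1M characters."""
    if chars_covered <= 0:
        raise ValueError("chars_covered must be positive")
    return price_usd * 1_000_000 / chars_covered


def best_published_rate(offers):
    """Return the cheapest normalized rate among (price_usd, chars_covered) offers.

    Free tiers should be left out of `offers` before calling this.
    """
    return min(usd_per_million_chars(price, chars) for price, chars in offers)


# Hypothetical provider with a pay-as-you-go rate and a volume tier.
offers = [
    (0.03, 1_000),       # $0.03 per 1K characters
    (99.0, 5_000_000),   # $99 plan covering 5M characters
]
print(round(best_published_rate(offers), 2))
```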

xAI isn't just the quality winner. At $4.20 per million characters, it's also the cheapest provider in the field, roughly an order of magnitude below the published ElevenLabs rate. Hume and UnrealSpeech round out the low end at under $10. Cartesia, LMNT, and ElevenLabs sit at the top.

Why OpenAI and Gemini Are Charted Separately

OpenAI and Gemini both bill audio output by the token rather than the character, which makes the cost of a given workload hard to predict ahead of time. Speech rate, pauses, and prompt length all affect token consumption. We've put them in a separate chart showing the published rate per million audio output tokens, so you can compare them against each other but not directly against the providers above.

Gemini 2.5 Flash TTS is the cheapest of the three models in that chart at $10 per million audio tokens, followed by OpenAI's gpt-4o-mini-tts at $12 and Gemini Pro at $20. If you're cost-sensitive and considering OpenAI or Gemini, run a representative workload through them first so you know what you'll actually pay.
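Once you have run such a workload, you can back out an effective per-character rate to compare against the character-billed providers. The sketch below uses the published per-token rates quoted above; the token and character counts are invented for illustration and will differ for your scripts.

```python
def cost_usd(audio_tokens, usd_per_million_tokens):
    """Cost of a job billed by audio output tokens."""
    return audio_tokens * usd_per_million_tokens / 1_000_000


def effective_usd_per_million_chars(audio_tokens, chars, usd_per_million_tokens):
    """Translate a measured token-billed job into USD per 1M input characters."""
    if chars <= 0:
        raise ValueError("chars must be positive")
    return cost_usd(audio_tokens, usd_per_million_tokens) * 1_000_000 / chars


# Published rates per 1M audio output tokens, as quoted in this article.
RATES = {"gemini-2.5-flash-tts": 10, "gpt-4o-mini-tts": 12, "gemini-pro-tts": 20}

# Hypothetical measurement: a 20,000-character script produced 50,000 audio tokens.
measured_tokens, measured_chars = 50_000, 20_000
for model, rate in RATES.items():
    per_million = effective_usd_per_million_chars(measured_tokens, measured_chars, rate)
    print(f"{model}: ${per_million:.2f} per 1M characters")
```

Because the token count depends on speech rate and pauses, the same script can yield a different effective rate on each model, so measure each one separately rather than reusing a single token count as this toy example does.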

The Overall Winner

xAI is the clear winner across our tests. It won three of the five scripts outright (Pride & Prejudice, The News, and code-switching), finished in the top two on the 1984 narration, and was probably second best on annotations despite a first-round exit against Groq Orpheus. It is also the cheapest provider in the field on published rates. That combination is rare in this space, where quality usually runs at a premium.

Gemini is the runner-up where the script demands emotional range, particularly for narration, but it tends toward overacting. Groq Orpheus is the model to pick if expressive annotations are central to your use case.

If you've taken the blind tests above, let us know whether your ears agree.