AX: Sentry vs TrackJS vs Raygun

Comparing Sentry, Raygun, and TrackJS for application error tracking. We tested how easily an AI agent could integrate each tool into an app, to compare each service's features, documentation, and usability.

How well do developer tools work with AI coding agents?

We test discoverability, documentation, onboarding, and integration quality to measure AX across developer platforms.

Comparing Supabase and PlanetScale for agent experience. We tested how easily Claude Code could discover, sign up for, and build a full-stack app with each database platform.

I tested three transcription APIs with different agent visibility levels to see if discoverability predicts quality. The invisible platform had the best features.

Comparing Honeycomb and SigNoz for application monitoring. We tested how easily an AI agent could integrate each tool into an app, to compare each service's features, documentation, and usability.

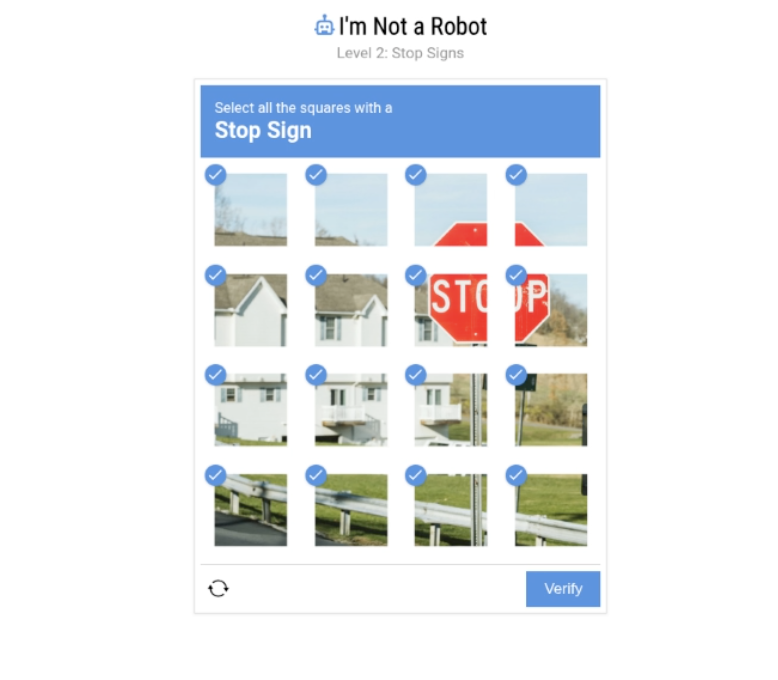

Testing three browser automation platforms through AI coding agents. We compare discoverability, documentation quality, and real-world features like CAPTCHA solving, parallel execution, and bot detection to see whether agent visibility predicts actual performance.

We're evaluating how well AI agents can discover, sign up for, onboard, and integrate with developer tools. Here's our framework for measuring Agent Experience (AX) across five key metrics.