Freestyle.sh for AI Agents: An AX Audit

Freestyle is a newer platform with a differentiated product: full Linux VMs, live VM forking, and Git + deployment in one place. Agents that know about it describe it accurately and recommend it for the right use cases. The problem is that agents rarely bring it up on their own, and it barely appears in web search results for the queries that would actually send developers its way.

We audited Freestyle (freestyle.sh) across four stages of the agent experience using our AX audit rubric: discoverability, onboarding, integration, and agent tooling. It averages 2.75/4. The product held up well in testing, and the docs MCP made a measurable difference when active. Discoverability is what dragged the score down.

- Onboarding session transcript

- Integration session transcript

- Agent tooling session transcript

- Feature comparison session transcript

Scores at a glance

Discoverability is the main problem

Freestyle scored 2/4 for discoverability. It was never recommended for a generic AI sandbox query (it was listed once, without a recommendation) and never appeared in "E2B alternatives" searches, which are exactly the prompts that drive developer adoption. When agents were asked about it directly, their knowledge was accurate and positive, but they don't reach for it unprompted.

The docs MCP makes a measurable difference in development

With the MCP installed, the agent wrote working setup code without the diagnostic VM loops the same tasks had required without it, and it used vm.fork() directly instead of working around it. The gap between the integration session and the agent tooling session is the clearest evidence that the docs MCP is doing real work.

The MCP has to be discovered by a developer first

The docs MCP didn't appear in any of the thirteen organic discoverability sessions. An agent won't install it unprompted. The tooling works well once it's in place, but it doesn't solve the discovery problem that precedes it.

Discoverability

Discoverability measures whether agents surface Freestyle unprompted, and what they say about it when they do.

How we tested it

All testing in this article was done with Claude Sonnet 4.6. Discoverability was tested via the Anthropic API with a fresh session per prompt. Onboarding, integration, and agent tooling were tested using a fresh Claude Code session.

We ran 13 prompts across four tiers in fresh sessions: generic queries, feature-specific queries, requests for alternatives, and finally asking about Freestyle directly by name.

Each prompt ran twice where relevant: once without web search (training data only) and once with web search enabled. When an agent searches, the sources it picks shape the answer. Competitor blog posts in the results mean competitor recommendations in the response.
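The condition setup described above can be sketched as request bodies for the Anthropic Messages API. This is a minimal sketch under stated assumptions, not the harness we ran. The model ID claude-sonnet-4-6 and the max_uses value are assumptions, and web_search_20250305 is the server-side web search tool version we are aware of. "Fresh session" simply means each request carries one user message and no history.

```javascript
// Hypothetical sketch of one discoverability "condition" as a Messages API
// request body. MODEL_ID and max_uses are assumptions, not our harness config.
const MODEL_ID = "claude-sonnet-4-6"; // assumed identifier for Claude Sonnet 4.6

function buildConditionRequest(prompt, webSearch) {
  const body = {
    model: MODEL_ID,
    max_tokens: 2048,
    // Fresh session: a single user turn with no prior conversation history.
    messages: [{ role: "user", content: prompt }],
  };
  if (webSearch) {
    // Server-side web search tool, attached only in the web search condition.
    body.tools = [{ type: "web_search_20250305", name: "web_search", max_uses: 5 }];
  }
  return body;
}

// Expand each prompt into its conditions: training data only and/or web search.
function expandConditions(prompts) {
  return prompts.flatMap(({ text, modes }) =>
    modes.map((webSearch) => ({ text, webSearch, request: buildConditionRequest(text, webSearch) }))
  );
}

const conditions = expandConditions([
  {
    text: "I need to give my AI agent a Linux environment to work in. What are the best sandboxing platforms available?",
    modes: [false, true],
  },
  {
    text: "What are some less well-known or newer sandbox platforms for running code in the cloud?",
    modes: [true], // this prompt ran with web search only
  },
]);

console.log(conditions.length); // 3
```

A prompt that ran in both modes expands into two independent requests. Nothing from the training-data-only run leaks into the web search run.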

Generic prompts

The four generic prompts covered the core use cases Freestyle targets: sandboxed code execution for AI agents, running untrusted code in cloud VMs, Linux environments for agents, and programmatically spinning up VMs for AI workloads. Each ran with and without web search.

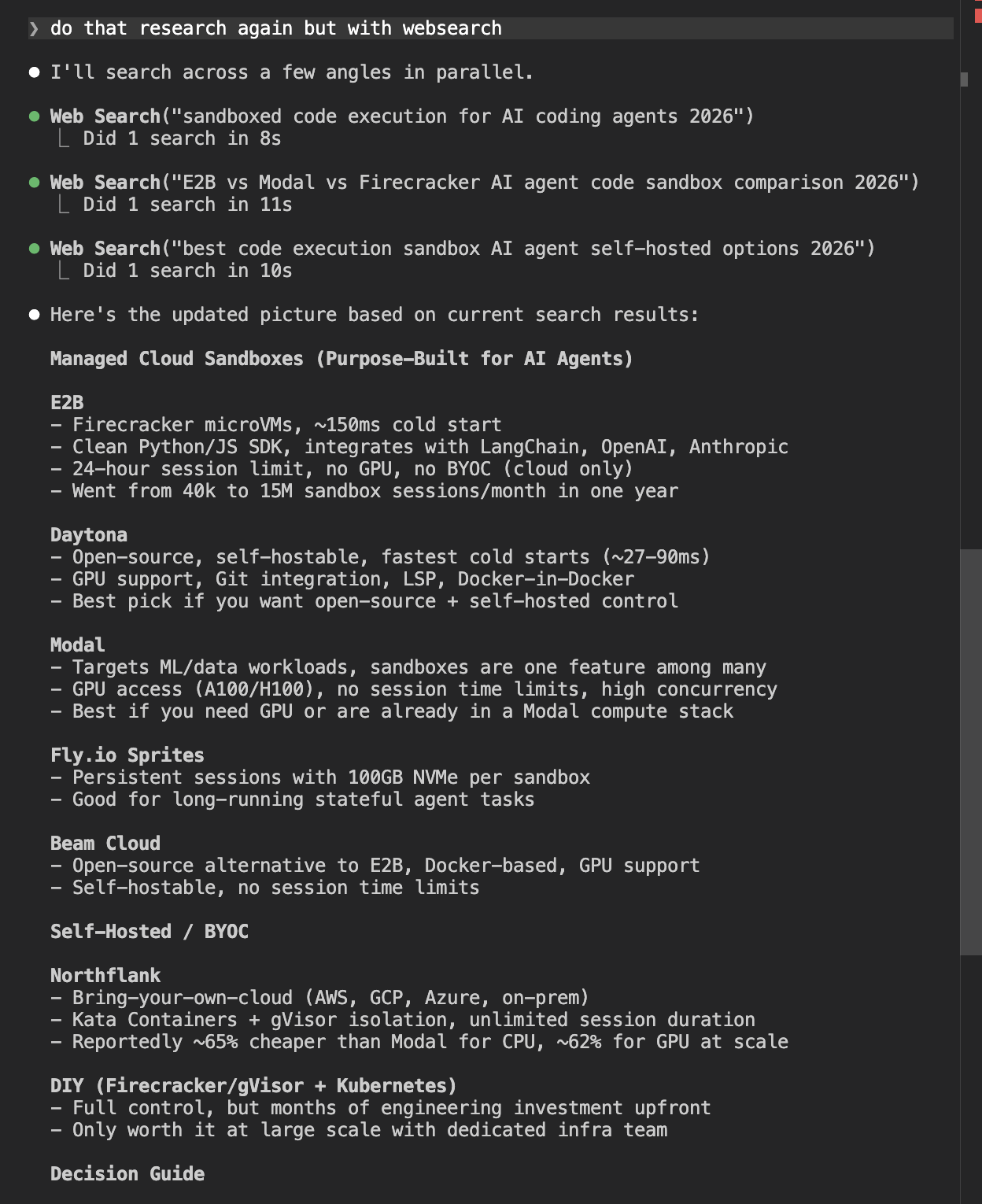

Freestyle did not appear in seven of the eight conditions. The consistent recommendations across all of them were E2B, Daytona, Modal, and Fly.io.

| Prompt | Web search | Freestyle appeared? |
|---|---|---|
| Sandboxed code execution for AI agents | No | No |
| Sandboxed code execution for AI agents | Yes | No |
| Platforms for running untrusted code in cloud VMs | No | No |
| Platforms for running untrusted code in cloud VMs | Yes | No |
| Linux environment for an AI agent | No | No |
| Linux environment for an AI agent | Yes | Listed, not recommended |
| Programmatically spin up/down VMs for AI workloads | No | No |
| Programmatically spin up/down VMs for AI workloads | Yes | No |

Example prompt

This is the one generic condition where Freestyle appeared: the "Linux environment for an AI agent" prompt with web search enabled.

I need to give my AI agent a Linux environment to work in.

What are the best sandboxing platforms available?

The agent searched the web before answering. Sources visited:

- northflank.com/blog

- koyeb.com/blog

- betterstack.com

- softwareseni.com

- superagent.sh

From those sources, the agent produced a decision guide:

| Need | Pick |
|---|---|
| Default choice, proven at scale | E2B |
| Fastest cold starts | Daytona |
| GPU/ML workloads | Modal |
| Stateful/persistent agents | Fly Sprites |
| Self-hosted/BYOC | Northflank |

Freestyle appeared in the body of the response ("Full Linux with real root + nested virtualization. Provisions in under 800ms.") but was excluded from this table entirely.

The sources tell most of the story. Three of the five were competitor-adjacent (Northflank's and Koyeb's own blogs, plus BetterStack). This is content written to rank for exactly these queries, and written without Freestyle in it. When an agent does web research in this space, it reads search results shaped by competitors before it writes a word of its response.

Feature-specific prompts

These prompts targeted Freestyle's documented differentiators directly: full Linux with systemd and SSH, VM forking, sub-50ms cold starts, MCP server support, and a unified Git + VM + deployment platform. Freestyle appeared in two of the seven conditions, both with web search enabled: the full-Linux query and the forking query.

| Prompt | Web search | Freestyle appeared? |
|---|---|---|
| Full Linux sandbox with systemd, SSH, multi-user | No | No |
| Full Linux sandbox with systemd, SSH, multi-user | Yes | Yes, recommended |
| Platforms that let you fork a running VM | No | No |
| Platforms that let you fork a running VM | Yes | Yes, first result |
| Serverless cold starts under 50ms | Yes | No |
| AI agent VMs with an MCP server interface | Yes | No |
| Multi-tenant Git + VMs + deployment from one provider | Yes | No |

Example prompt

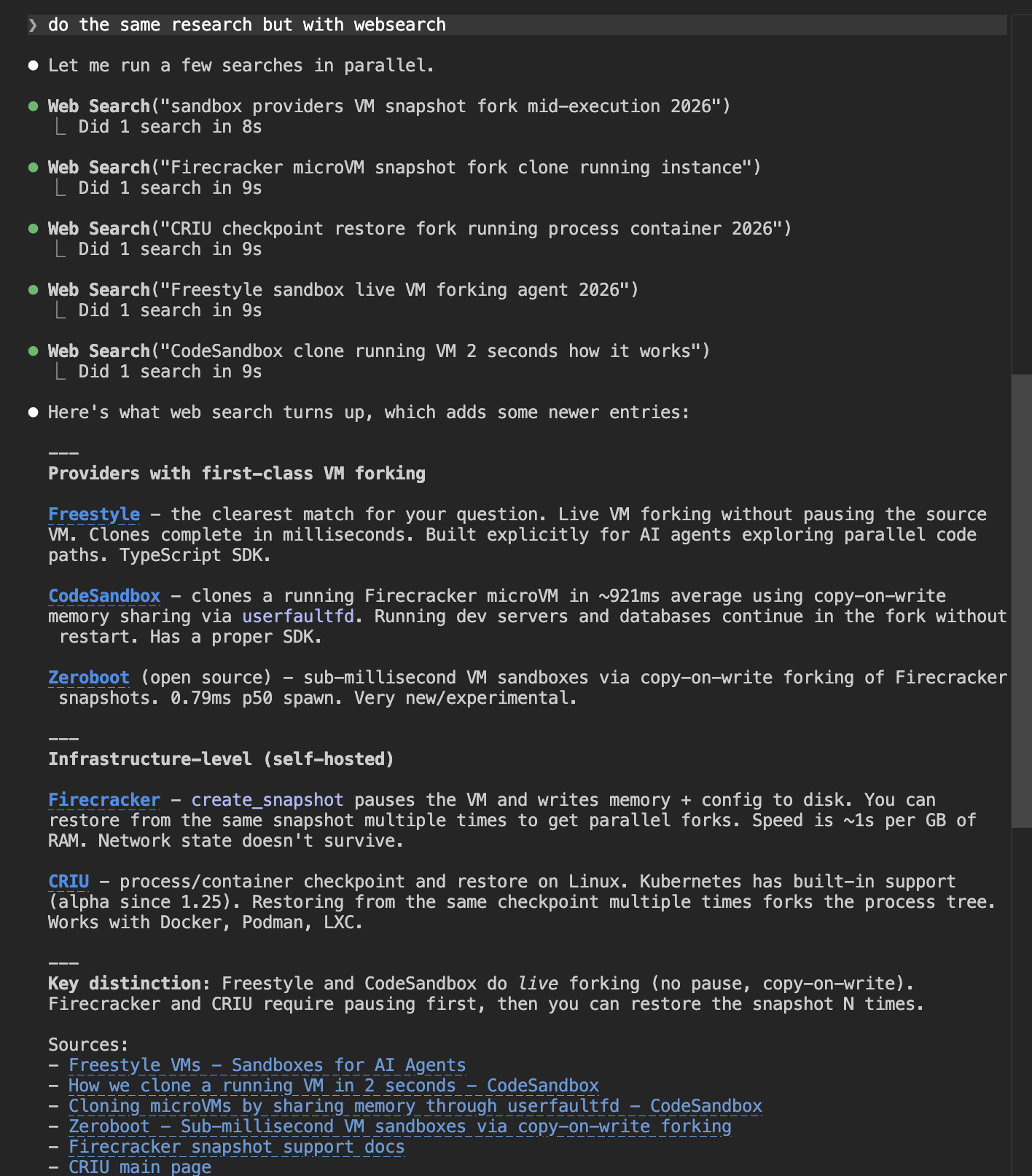

The forking prompt produced the strongest result in the entire discoverability test:

What sandbox providers let you fork a running VM? Like, duplicate it mid-execution?

Sources visited:

- freestyle.sh/products/vms

- codesandbox.io/blog

- github.com/zerobootdev/zeroboot

- github.com/firecracker-microvm/firecracker

- criu.org

The agent's response:

Freestyle's own product page was the first source visited, and it showed. The agent had accurate feature detail and recommended Freestyle above its alternatives. This is what Freestyle's discoverability looks like when the query is narrow enough and the right page is indexed.

The other five feature-specific conditions returned nothing. Freestyle's VM provision time is around 800ms, so sub-50ms cold starts are not a category it competes in. The V8 isolate tier the agent recommended for that query is a different class of product. The MCP server support and multi-tenant platform queries drew answers toward E2B, Daytona, and Northflank, whose content targets those framings directly.

Asking for alternatives

These prompts target developers who already know the space and want more options. "E2B alternatives with more Linux control" is close to a direct description of Freestyle's pitch. Freestyle did not appear in any of the four conditions.

| Prompt | Web search | Freestyle appeared? |
|---|---|---|
| Alternatives to E2B, Daytona, and Modal | Yes | No |
| Newer/less-known sandbox platforms | Yes | No |
| E2B alternatives with more Linux control | No | No |
| E2B alternatives with more Linux control | Yes | No |

Example prompt

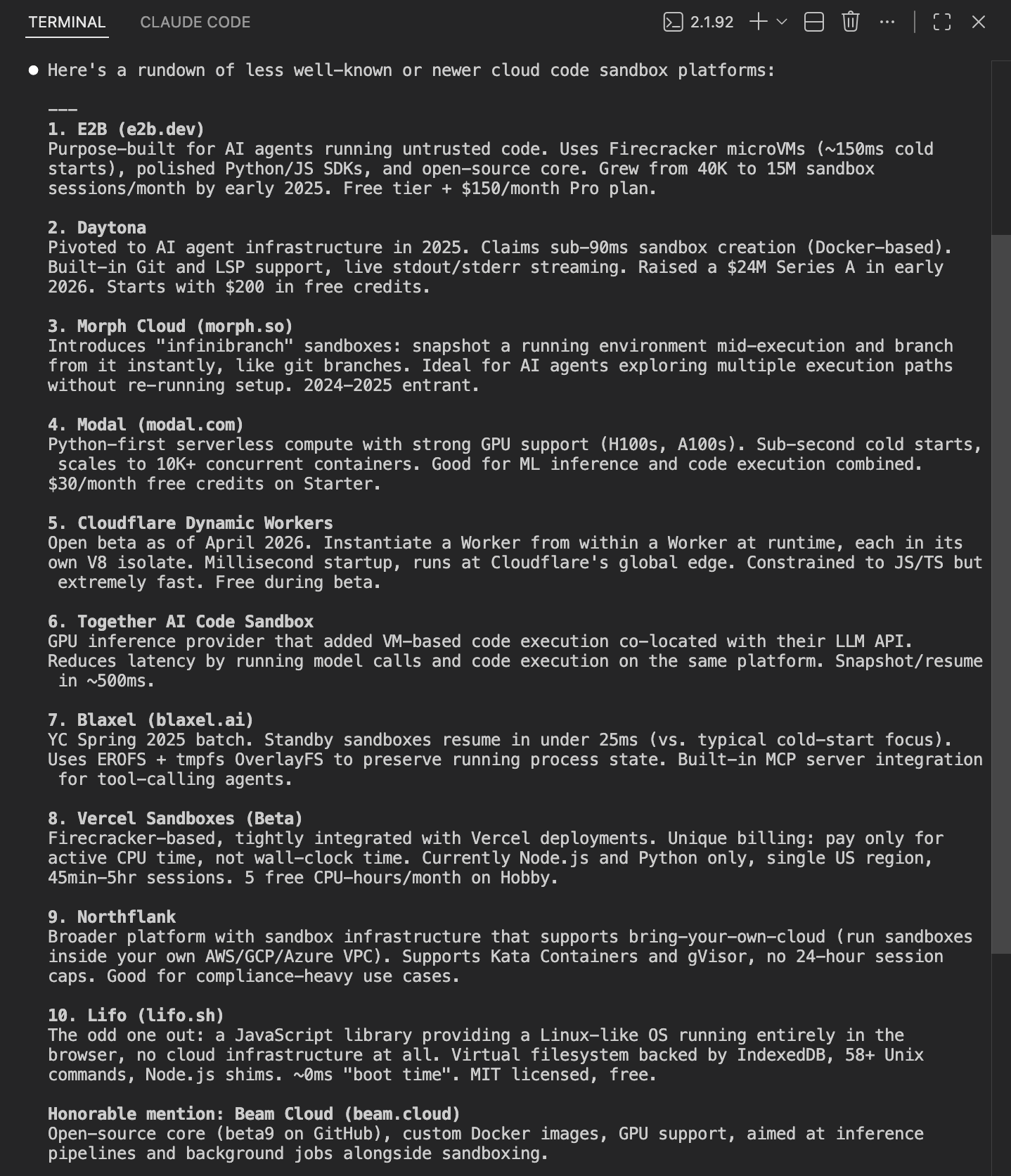

What are some less well-known or newer sandbox platforms for running code

in the cloud?

Sources visited:

- northflank.com/blog

- koyeb.com/blog

- betterstack.com

- morph.so

- blaxel.ai

The agent's top three results:

Morph Cloud's "infinibranch" feature is a direct analogue of Freestyle's VM forking, but Freestyle was not mentioned.

The "E2B alternatives with more Linux control" prompt told the same story. Four of the ten sources the agent visited were Northflank blog posts, and Northflank appeared as the top recommendation without Freestyle in sight. A platform that publishes comparison articles at this volume will consistently outrank one that hasn't published any.

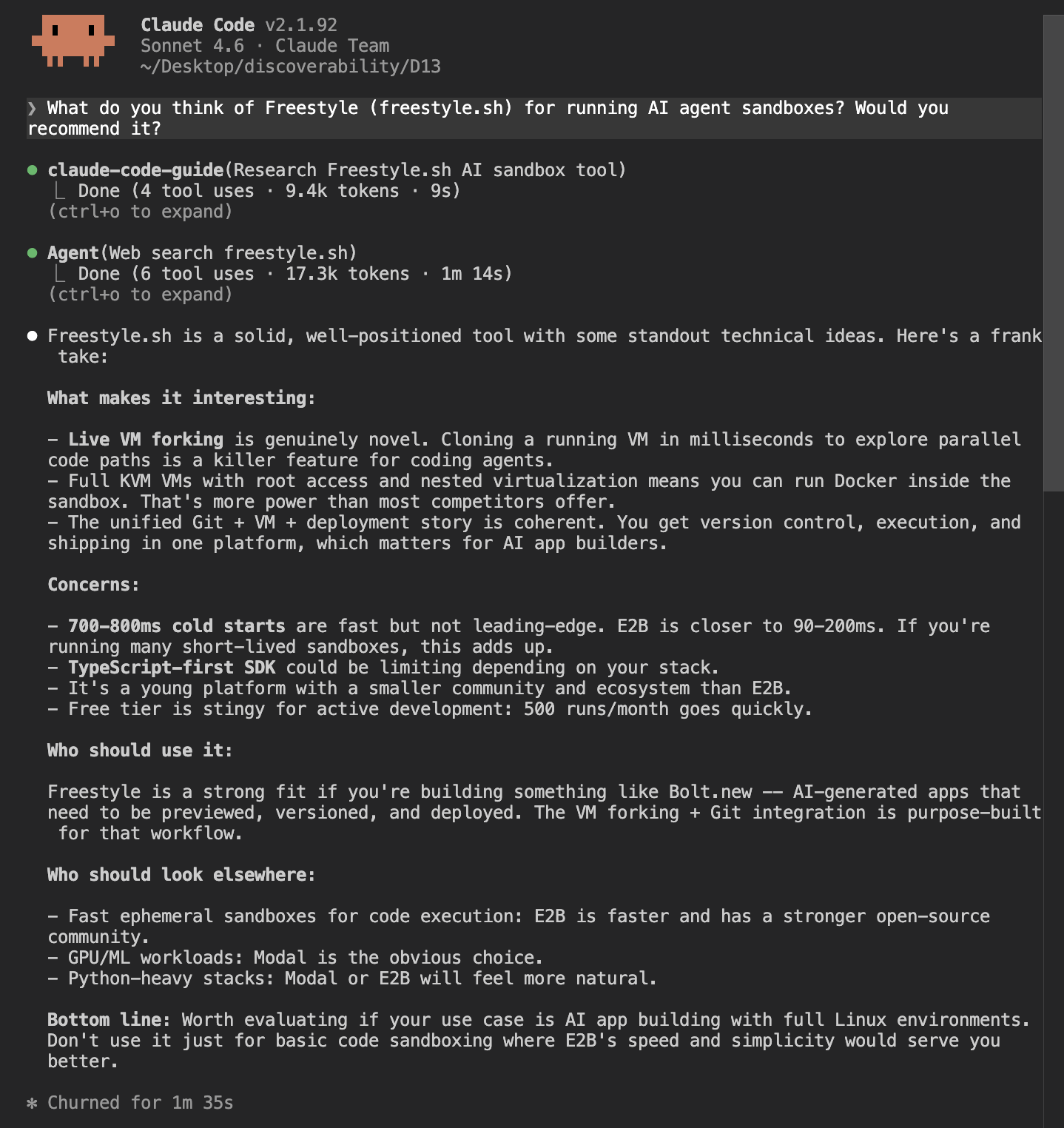

Sentiment when named directly

Once Freestyle was named explicitly, the agent's understanding was accurate and detailed. Three follow-up prompts asked for a general assessment, a Freestyle vs E2B comparison, and a Freestyle vs Modal and Daytona comparison.

| Prompt | Web search | Sentiment |
|---|---|---|
| "What do you think of Freestyle?" | Yes | Positive with caveats |
| Freestyle vs E2B for Linux environments | Yes | Recommended for persistent workspaces |
| Freestyle vs Modal and Daytona | Yes | Recommended for AI app builders |

Example prompt

What do you think of Freestyle (freestyle.sh) for running AI agent sandboxes?

Would you recommend it?

The agent's response:

The agent knew Freestyle well when asked directly. The feature detail was accurate, the competitive framing was fair, and the recommended use case matched Freestyle's own positioning. The caveats (cold start speed, community size, free tier limits) were consistent across all three prompts and reflect real weaknesses rather than confusion.

Agents understand Freestyle accurately when asked, but they don't reach for it unprompted.

Feature comparison

The final prompt asked the agent to build a full competitive matrix from web search. Freestyle was named directly, so this is not a discoverability test. The goal was to see how accurately an agent could research and synthesise Freestyle's positioning against its five main competitors across the dimensions that matter for AI agent use cases.

Use web search to build a feature comparison matrix for Freestyle (freestyle.sh)

and its main competitors: E2B, Modal, Daytona, Blaxel, and Vercel. Cover VM startup

speed, cold start times, boot disk access, reboot support, VM forking, full Linux

support, GPU support, language support, MCP server availability, llms.txt, multi-tenant

Git, serverless runs, deployments, free tier, and agent discoverability.

The agent launched parallel subagents for each platform and synthesised the results into the matrix below. We fact-checked every Freestyle claim against the official docs, the Freestyle pricing page, and the launch HN post, and all of them held up.

| Feature | Freestyle | E2B | Modal | Daytona | Blaxel | Vercel Sandbox |
|---|---|---|---|---|---|---|
| VM Startup / Cold Start | ~500ms median (320ms median, targeting 200ms); restored memory snapshot | ~150–200ms (Firecracker microVM snapshot restore) | 2–4s general containers; ~10s GPU with snapshotting | Sub-90ms; some configs 27ms (container-based) | ~25ms resume from standby; scales to zero after 5s idle | Not published; archived functions add ~1s; pre-warmed on paid plans |
| Boot Disk Access | Full root disk; full KVM/nested virt support | Full disk inside Firecracker microVM | Ephemeral container disk + persistent modal.Volume mounts | Full disk; OCI container images (Debian-based) | Full filesystem (root FS in memory); volumes for persistence | Read-only deploy bundle + writable /tmp (500 MB); no persistent disk |
| Reboot Support | Pause/resume via memory snapshots; no explicit reboot primitive | Sessions up to 24h (Pro); stop/restart pattern | Re-invoke function; no reboot primitive; keep_warm for warm containers | Auto-stop/archive lifecycle; no reboot | Persistent standby; full state restore via snapshots | Not supported; Workflow SDK enables durable pause/resume across steps |
| VM Forking | Yes: live fork in ~400ms pause; O(1) copy-on-write; original continues unpaused | In development (not yet GA) | No: scale-out via parallel container spawning only | No | Snapshot-based volume cloning; not live VM fork | No: snapshots restore fresh state; running processes not preserved |
| Full Linux | Yes: full hardware virtualization (not microVMs), real root, systemd, eBPF, nested virt, multi-user | Yes: Firecracker microVM, full Linux | Yes: Linux containers, full root, arbitrary packages | Yes: OCI containers, Debian-based | Yes: microVMs, full filesystem/shell/process access | Partial: Node.js runtime is Lambda-style; Edge runtime is V8 isolates only |
| GPU Support | No | No (CPU-only; roadmap) | Yes: T4, L4, A100, H100, H200, B200; per-second billing | Yes: 12GB GDDR6 variants | No | No native GPU; third-party via integrations |
| Language Support | Node.js, Python (uv), Deno, Bun, Ruby, Java (Corretto) | Python, JavaScript/TypeScript | Python-first SDK; any language in containers | Python, TypeScript, JavaScript | Python, Node.js, Rust, shell | Node.js, Python, Go, Ruby, Rust, Bun, Wasm, Edge |
| MCP Server | Yes: Freestyle VM MCP; Freestyle Cloud MCP in development | Yes: open-source MCP server (Apache-2.0) | Yes: host/scale MCP servers on Modal; not first-class managed | Yes: dedicated MCP server; integrates with Claude, Cursor, Windsurf | Yes: built-in MCP server in every sandbox; HTTP stream compatible | Yes: official mcp.vercel.com (OAuth, platform mgmt) + host your own |
| llms.txt | Yes: freestyle.sh/llms.txt | Yes: e2b.mintlify.app/llms.txt | Yes: modal.com/llms-full.txt | Yes: daytona.io/docs/llms.txt + llms-full.txt | Yes: docs.blaxel.ai/llms.txt | Yes: vercel.com/llms.txt + vercel.com/docs/llms-full.txt |
| Multi-tenant Git | Yes: built-in git hosting; only sandbox provider with this | Git ops inside sandboxes; no hosted git service | No built-in git | Git clone + credential handling inside sandboxes; no hosted git | No dedicated git hosting | Yes: GitHub, GitLab, Bitbucket, Azure DevOps; Hobby restricted to personal repos |
| Serverless Runs | Yes: "Freestyle Runs" product; 500 runs/mo free | Ephemeral sandboxes (pay-per-second, serverless-style) | Yes: core product; scale to zero | Yes: ephemeral sandboxes; serverless-style | Yes: natively serverless; scale to zero after 5s | Yes: 1M invocations/mo free; up to 800s duration (Pro) |
| Deployments | Yes: Git-triggered auto-deploys, preview deployments, managed domains | No: sandbox execution only | Yes: modal deploy; web endpoints, ASGI/WSGI, crons | Yes: customer-managed compute; open-source self-hostable | Yes: agents, MCP servers, batch jobs; multi-region | Yes: core product; Git push, CLI, REST API; preview per branch |
| Free Tier | No credit card; 10 concurrent VMs, 5 managed domains, 500 repos, 500 runs/mo | $100 one-time credit; 20 concurrent sandboxes, 1-hr sessions | $30/month credits; 100 containers, 10 GPU concurrency | $200 credits; no credit card | $200 credits; 10 concurrent sandboxes; no credit card | Hobby: 1M invocations, 4 CPU-hr, 360 GB-hr memory, 100 GB bandwidth/mo |
| Agent Discoverability | Typed SDK; MCP tools/list protocol | MCP tools/list; open-source SDK | Function registry via SDK; MCP server hosting | MCP tools/list; integrates with Claude/Cursor/Windsurf | Dynamic tools/list via built-in MCP; 360° observability | Strong: mcp.vercel.com, llms.txt, Skills.sh, Workflow SDK, AI marketplace |
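The synthesis step behind a matrix like the one above (one subagent per platform, merged into one table) amounts to a pivot from per-platform records into feature rows. The sketch below assumes each subagent returns a flat object mapping feature names to cell text. The record shape and the renderMatrix name are hypothetical, and the sample values are abbreviated from the table above, with one entry left out to show the missing-data case.

```javascript
// Hypothetical sketch: pivot per-platform research results into a
// markdown feature matrix (rows = features, columns = platforms).
function renderMatrix(features, results) {
  const platforms = Object.keys(results);
  // Escape pipes so a cell value cannot break the table layout.
  const escapeCell = (s) => String(s).replace(/\|/g, "\\|");
  const header = `| Feature | ${platforms.join(" | ")} |`;
  const divider = `|${"---|".repeat(platforms.length + 1)}`;
  const rows = features.map((feature) => {
    // Missing data is shown explicitly rather than silently dropped.
    const cells = platforms.map((p) => escapeCell(results[p][feature] ?? "Not found"));
    return `| ${feature} | ${cells.join(" | ")} |`;
  });
  return [header, divider, ...rows].join("\n");
}

const table = renderMatrix(["VM Forking", "GPU Support"], {
  Freestyle: { "VM Forking": "Yes", "GPU Support": "No" },
  Modal: { "GPU Support": "Yes" }, // VM Forking deliberately omitted
});
console.log(table);
```

Rendering "Not found" rather than an empty cell makes research gaps visible. That matters when every claim is going to be fact-checked afterwards.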

The agent also produced a category-level summary of where each platform leads:

| Who wins at... | Platform |
|---|---|
| Fastest cold start | Daytona (27–90ms) / Blaxel (25ms standby) |
| Live VM forking | Freestyle (only production-grade live fork) |
| GPU workloads | Modal (most GPU SKUs) / Daytona (GPU available) |
| Built-in git hosting | Freestyle (only sandbox provider with multi-tenant git) |
| MCP-native agent infra | Blaxel (MCP in every sandbox by default) |
| Deployment platform | Vercel (strongest CI/CD, preview deploys, Git integration) |
| Agent discoverability | Vercel (Skills.sh, Workflow SDK, AI marketplace) |
| Open-source / self-host | Daytona (Apache-2.0, self-hostable) |
| Free tier value | Daytona ($200 credits) / Blaxel ($200 credits) / Modal ($30/mo recurring) |

Freestyle owns two categories outright: live VM forking and built-in multi-tenant Git hosting. No other audited platform offers a production live fork. The matrix marks Vercel "Yes" for Git, but that reflects integrations with external providers such as GitHub rather than hosted repositories. The areas where Freestyle doesn't lead (cold starts, GPU support, agent discoverability, free tier generosity) are consistent with what the agent said when asked about it directly in the sentiment prompts. The matrix confirms the competitive position, and the discoverability results explain why developers don't find it.

Discoverability score

Freestyle was recommended in two of nineteen organic conditions, both with web search enabled and both for narrow feature-specific queries. Beyond those:

- It was listed once, without a recommendation, for a generic prompt.
- It never appeared when alternatives were requested.
- It was absent even when the prompt asked specifically for E2B alternatives with more Linux control, which is close to a description of Freestyle's product.

The two recommendations were genuine, which keeps this above 1/4. Strictly, the bar for 2/4 is "mentioned when asked for more options or alternatives", and Freestyle didn't clear it. The 2/4 rests on those two feature-specific results alone.
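The counts behind this score can be reproduced from the three organic tables above. In the sketch below, each condition is tagged none, listed, or recommended. These labels are our own shorthand, not rubric terms. They separate the single generic condition where Freestyle was listed without a recommendation from the two feature-specific recommendations.

```javascript
// Organic conditions from the generic, feature-specific, and alternatives tables.
// Each row: [tier, prompt, webSearch, result]. Result labels are our own shorthand.
const ROWS = [
  ["generic", "Sandboxed code execution", false, "none"],
  ["generic", "Sandboxed code execution", true, "none"],
  ["generic", "Untrusted code in cloud VMs", false, "none"],
  ["generic", "Untrusted code in cloud VMs", true, "none"],
  ["generic", "Linux environment for an agent", false, "none"],
  ["generic", "Linux environment for an agent", true, "listed"],
  ["generic", "Spin up/down VMs", false, "none"],
  ["generic", "Spin up/down VMs", true, "none"],
  ["feature", "Full Linux with systemd/SSH", false, "none"],
  ["feature", "Full Linux with systemd/SSH", true, "recommended"],
  ["feature", "Fork a running VM", false, "none"],
  ["feature", "Fork a running VM", true, "recommended"],
  ["feature", "Cold starts under 50ms", true, "none"],
  ["feature", "VMs with an MCP interface", true, "none"],
  ["feature", "Git + VMs + deployment", true, "none"],
  ["alternatives", "Alternatives to E2B/Daytona/Modal", true, "none"],
  ["alternatives", "Newer sandbox platforms", true, "none"],
  ["alternatives", "E2B alternatives, more Linux control", false, "none"],
  ["alternatives", "E2B alternatives, more Linux control", true, "none"],
];

function tally(rows) {
  const counts = { total: rows.length, listed: 0, recommended: 0, mentionsWithoutSearch: 0, byTier: {} };
  for (const [tier, , webSearch, result] of rows) {
    counts.byTier[tier] = counts.byTier[tier] || { conditions: 0, mentions: 0 };
    counts.byTier[tier].conditions += 1;
    if (result === "none") continue;
    counts.byTier[tier].mentions += 1;
    counts[result] += 1; // "listed" or "recommended"
    if (!webSearch) counts.mentionsWithoutSearch += 1;
  }
  return counts;
}

const summary = tally(ROWS);
console.log(summary.total, summary.recommended, summary.listed); // 19 2 1
```

The mentionsWithoutSearch count comes out at zero. That is the "training data only" half of the test in one number: without web search, Freestyle never came up.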

Onboarding

Onboarding measures whether an agent can take a developer from zero to a working API call accurately and with minimal manual intervention.

How we tested it

We ran a fresh session with no prior context and no MCP active. Three prompts in sequence: first asking the agent to set up an account and API key, then asking whether any of that could be automated, and finally asking it to run a hello world VM once we had the key in place. The session represents what an agent-assisted onboarding path looks like before any tooling is added.

Account creation

The account setup prompt asked the agent to walk through signup and API key generation from scratch. The agent used web search via a subagent and returned accurate instructions.

Example prompt

I want to get started with Freestyle (freestyle.sh) to run some sandboxed VMs.

Get me set up with an account and an API key I can use.

Sources visited:

- freestyle.sh

- docs.freestyle.sh

- dash.freestyle.sh

The agent's response:

Here's how to get set up with Freestyle:

1. Create an account — Go to https://dash.freestyle.sh and sign up. No credit card required.

2. Get your API key — Once logged in, generate an API key from your dashboard settings.

3. Install the SDK:

npm i freestyle-sandboxes # Node.js/TypeScript

pip install freestyle # Python

A follow-up prompt asked whether any of that process could be automated. The agent checked the npm package, Freestyle's GitHub org, and the MCP server docs before answering.

Example prompt

Before I sign up manually, is there a CLI tool, MCP server, or any other

programmatic way to create a Freestyle account and get API credentials

without going through the web UI?

Sources visited:

- npmjs.com/package/freestyle-sandboxes

- github.com/freestyle-sh

- docs.freestyle.sh/api/mcp/mcp

The agent's response:

Not possible programmatically:

- Account signup requires the web UI at https://dash.freestyle.sh

- Initial API key must also be generated from the dashboard

- No CLI tool, OAuth flow, or account creation API exists

The MCP server (freestyle-docs) is documentation-only. It won't help with auth.

The answer is correct and complete. Signup requires the browser and there is no programmatic bootstrap. The agent also correctly identified that the docs MCP server is read-only and won't help with auth. A developer who finished this step would have accurate expectations before opening a browser.

A smoother path would be a CLI tool or an auth-capable MCP server that lets the agent create an account and retrieve an API key without passing control back to the developer. Several platforms in this space offer exactly that. Suppose an agent could run a login command (a hypothetical freestyle auth login, for example) or invoke an MCP tool to provision credentials. That would remove the only manual step in an otherwise clean onboarding flow.
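Until signup can be automated, the least an agent-written script can do is fail fast with a pointer back to the manual step. The sketch below assumes the key is stored in .env as FREESTYLE_API_KEY, the variable the integration script later in this audit reads. parseDotEnv and requireApiKey are hypothetical helpers; in a real project the dotenv package would do the parsing.

```javascript
// Hypothetical helpers: parse .env text, then fail fast with a pointer to the
// manual dashboard step if the Freestyle API key is missing.
function parseDotEnv(text) {
  const env = {};
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.trim();
    if (!line || line.startsWith("#")) continue; // skip blank lines and comments
    const eq = line.indexOf("=");
    if (eq === -1) continue; // not a KEY=value line
    const key = line.slice(0, eq).trim();
    let value = line.slice(eq + 1).trim();
    const quote = value[0];
    // Strip one pair of matching surrounding quotes, if present.
    if (value.length >= 2 && (quote === '"' || quote === "'") && value[value.length - 1] === quote) {
      value = value.slice(1, -1);
    }
    env[key] = value;
  }
  return env;
}

function requireApiKey(env) {
  const key = env.FREESTYLE_API_KEY;
  if (!key) {
    throw new Error(
      "FREESTYLE_API_KEY is not set. Sign up and create a key at https://dash.freestyle.sh, then add it to .env."
    );
  }
  return key;
}

// "fs_example_123" is a placeholder, not a real key format.
const env = parseDotEnv('# local secrets\nFREESTYLE_API_KEY="fs_example_123"\n');
console.log(requireApiKey(env)); // fs_example_123
```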

Hello world

Once the API key was in place, the agent was asked to create a VM and run a command. It looked up the freestyle.vms.create() and vm.exec() patterns from docs, initialised a project, wrote a script, and ran it.

Example prompt

I added an api key to the .env file. Use the Freestyle API to create a new VM

and run 'echo hello world' in it. Show me the output.

Code produced (run.mjs):

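```javascript
import { freestyle } from "freestyle-sandboxes";
import { config } from "dotenv";

config(); // load .env (including the API key) into process.env

const { vm } = await freestyle.vms.create(); // provision a new VM
const result = await vm.exec("echo hello world"); // run a shell command inside it
console.log(result);
```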

Output:

```
stdout: hello world
exit code: 0
```

The script ran on the first attempt with no errors or retries. The whole onboarding took three prompts with no wrong turns.

Onboarding score

Freestyle's onboarding path has a single point of friction: signup requires a browser, and the initial API key must come from the dashboard. The agent identified this accurately and explained it without misleading the developer. Everything after that step was handled correctly. The SDK installed cleanly, the API call pattern was found from docs, and the hello world VM ran without errors on the first attempt. The 3/4 score reflects that the manual step is real (a developer building an automated onboarding pipeline cannot skip it), but it is a product constraint rather than a documentation failure or agent error.

Integration

Integration measures whether an agent can execute a realistic, multi-step workflow using the platform's API (here: VM creation, environment setup, snapshotting, and parallel forking).

How we tested it

We ran a fresh session with no MCP active and no prior context. Two prompts: first, spin up a VM, install Python dependencies, seed a database, and snapshot the result; second, fork that snapshot three times and run three scripts in parallel. The agent's only resources were web search and the installed freestyle-sandboxes npm package.

Setup and snapshot

The first prompt asked the agent to create a Python environment inside a Freestyle VM, install packages, seed a SQLite database, and snapshot the result. With no platform documentation available directly, the agent opened a web search subagent to research the Freestyle SDK API before writing any code.

Example prompt

I want to use Freestyle.sh to spin up a VM with Python and run a script inside it

that does some expensive setup — install a few packages (pandas, numpy, requests)

and initialise a small SQLite database with some seed data. Once the setup is done,

snapshot the VM so I don't have to repeat the setup. The VM should be ready for

the next step.

The agent found the correct vms.create(), vm.exec(), vm.snapshot(), and snapshotId patterns from web search, installed the SDK, then read through node_modules/freestyle-sandboxes/index.mjs directly to confirm the snapshot return shape before writing code. That last step (reading the SDK source rather than trusting a web search result) reflects what an agent without dedicated docs tooling has to do to verify platform-specific details.

The first script failed immediately:

- Write → setup.mjs (first attempt): pip: not found
- Bash → node setup.mjs: failed
- Write → setup.mjs (second attempt): python3: not found
- Bash → diagnostic VM to check the base image: confirmed Debian 13 (trixie), no Python pre-installed
- Bash → throwaway VM: tested apt-get install python3 python3-pip and pip3 install pandas numpy requests --break-system-packages: worked

The Freestyle base image is a bare Debian 13 VM with no Python pre-installed. Web search had no way to surface that detail, as it is not covered in any blog post or comparison article the agent could find. The agent had to discover it by spinning up diagnostic VMs and probing the environment directly. By the end of this process it had two stray VMs left running that it cleaned up separately.
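Stray VMs are a general hazard when an agent probes an environment by trial and error. Wrapping each throwaway VM in try/finally guarantees teardown whether the probe succeeds or throws. In the sketch below, client, createVm, exec, and kill are hypothetical stand-ins, loosely modelled on the create, exec, and kill calls in this audit's scripts. They are injected so the pattern runs against a fake rather than the real SDK.

```javascript
// Hypothetical helper: run a probe on a throwaway VM and always tear the VM down,
// even if the probe throws. `client` is an injected stand-in, not the Freestyle SDK.
async function withTempVm(client, probe) {
  const vm = await client.createVm();
  try {
    return await probe(vm);
  } finally {
    await client.kill(vm); // runs after success and after failure
  }
}

// In-memory fake that tracks live VMs and reports Python as missing (exit code 1),
// mirroring what the diagnostic VMs found on the base image.
function makeFakeClient() {
  const alive = new Set();
  let next = 0;
  return {
    alive,
    async createVm() {
      const vm = { id: `vm-${next++}`, exec: async () => ({ stdout: "", exitCode: 1 }) };
      alive.add(vm.id);
      return vm;
    },
    async kill(vm) {
      alive.delete(vm.id);
    },
  };
}

(async () => {
  const client = makeFakeClient();
  const hasPython = await withTempVm(client, async (vm) => (await vm.exec("command -v python3")).exitCode === 0);
  console.log(hasPython, client.alive.size); // false 0
})();
```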

The final script ran correctly:

```javascript
import { Freestyle } from "freestyle-sandboxes";
import { readFileSync, writeFileSync } from "fs";

// Read the API key from .env without dotenv (throws if the key is missing).
const apiKey = readFileSync(".env", "utf-8").match(/FREESTYLE_API_KEY=(.+)/)[1].trim();
const freestyle = new Freestyle({ apiKey });

// Excerpt: DB_SCRIPT (the Python seeding script), logging, and the call to main() are omitted.
async function main() {
  const { vm } = await freestyle.vms.create();

  // Python not pre-installed on Debian 13 base image
  await vm.exec({ command: "apt-get update -qq && apt-get install -y python3 python3-pip 2>&1 | tail -5", timeoutMs: 180_000 });
  await vm.exec({ command: "pip3 install pandas numpy requests --break-system-packages --root-user-action=ignore 2>&1 | tail -5", timeoutMs: 300_000 });

  // Write and run the seeding script, then snapshot the prepared state.
  await vm.fs.writeTextFile("/tmp/init_db.py", DB_SCRIPT);
  await vm.exec({ command: "python3 /tmp/init_db.py" });
  const { snapshotId } = await vm.snapshot();
  writeFileSync("snapshot-id.txt", snapshotId);

  await vm.suspend(); // suspend the setup VM instead of deleting it
}
```

Output:

```
VM created: n7277xrvbw3uayots7xe
Installing Python...
Installing pandas, numpy, requests...
Seeded 5 rows into products table
packages ok | rows: 5
Snapshot created: sc-8y4ueg9ozrcg7y6bpp43
Snapshot ID saved to snapshot-id.txt
VM suspended. Setup complete.
```
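The point of the snapshot is that later runs skip the expensive setup, and a get-or-create wrapper makes that reuse explicit. In the sketch below, store stands in for snapshot-id.txt. runSetup is a hypothetical callback that would wrap the create, exec, and snapshot sequence above and resolve to a snapshot ID.

```javascript
// Hypothetical get-or-create wrapper: reuse a saved snapshot ID, or run the
// expensive setup once and record the ID it produces.
// `store` is anything with get/set (a Map here, standing in for snapshot-id.txt).
async function getOrCreateSnapshot(store, runSetup) {
  const cached = store.get("snapshotId");
  if (cached) return { snapshotId: cached, reused: true };
  const snapshotId = await runSetup(); // e.g. create VM, install packages, seed DB, snapshot
  store.set("snapshotId", snapshotId);
  return { snapshotId, reused: false };
}

(async () => {
  const store = new Map();
  let setupRuns = 0;
  const fakeSetup = async () => {
    setupRuns += 1;
    return "sc-example"; // placeholder snapshot ID
  };
  const first = await getOrCreateSnapshot(store, fakeSetup);
  const second = await getOrCreateSnapshot(store, fakeSetup);
  console.log(first.reused, second.reused, setupRuns); // false true 1
})();
```

The sketch ignores two caveats. First, concurrent callers could both miss the cache and run setup twice. Second, a saved ID goes stale if the snapshot is deleted, so a real wrapper would need to handle a failed boot from the cached ID.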

Parallel forks

The fork prompt asked the agent to resume from the snapshot, create three parallel forks, run a different script in each, collect results, and shut everything down.

Example prompt

Now fork that snapshotted VM 3 times and run a different data-processing script

in each fork simultaneously. Fork 1 should query the database and return the row

count. Fork 2 should compute the mean of a numpy array. Fork 3 should fetch the

current Bitcoin price from a public API using requests. Collect all three results

and shut down the forks when done.

The agent read the SDK source and found a vm.fork() method, used it, and hit two errors in sequence:

- Write → fork-run.mjs using setupVm.fork(): VM_NOT_RUNNING: VM is not running
- Edit → added await setupVm.start() before forking: RESUMED_VM_NON_RESPONSIVE: VM not responsive after 120s

The vm.fork() method only works on a running VM, not a suspended one, and the SDK source gives no indication of this constraint. The agent found out only by running the code and reading the errors. After two failed attempts, it abandoned .fork() entirely and switched to creating three fresh VMs directly from the snapshot ID. That is equivalent here, because each fork only needs the snapshotted setup state, and it worked on the first try.

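```javascript
// Excerpt: freestyle, SNAPSHOT_ID, SCRIPTS, and runOnVm (a helper that runs one script on a VM) are defined elsewhere in the script.
async function main() {
  // Boot three independent VMs from the same snapshot, in parallel.
  const [r1, r2, r3] = await Promise.all([
    freestyle.vms.create({ snapshotId: SNAPSHOT_ID }),
    freestyle.vms.create({ snapshotId: SNAPSHOT_ID }),
    freestyle.vms.create({ snapshotId: SNAPSHOT_ID }),
  ]);

  // Run a different script on each VM concurrently.
  const results = await Promise.all([
    runOnVm(r1.vm, SCRIPTS.fork1),
    runOnVm(r2.vm, SCRIPTS.fork2),
    runOnVm(r3.vm, SCRIPTS.fork3),
  ]);

  // Tear down all three VMs.
  await Promise.all([r1.vm.kill(), r2.vm.kill(), r3.vm.kill()]);
}
```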

Output:

```
Fork 1 (DB row count): row_count:5
Fork 2 (numpy mean): mean:55.0
Fork 3 (Bitcoin price): btc_price_usd:71850.00
All VMs terminated.
```

The task completed correctly, but the agent worked around Freestyle's vm.fork() rather than using it. The constraint (that fork only runs on a live VM, not a suspended one) is the kind of platform-specific detail that a docs MCP would surface before the agent writes a line of code.
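What the agent ended up doing by hand (try a live fork, then fall back to booting fresh VMs from the snapshot ID) can be written down as an explicit strategy. This sketch is not Freestyle's API: client.forkVm and client.createFromSnapshot are hypothetical stand-ins. Falling back on the VM_NOT_RUNNING and RESUMED_VM_NON_RESPONSIVE codes assumes errors expose those strings as a code property. The fallback only matches a fork when the forks need the snapshotted state, not anything the source VM changed in memory afterwards.

```javascript
// Hypothetical fork strategy: try a live fork first, and fall back to booting
// fresh VMs from the snapshot when the source VM is not usable.
// client.forkVm and client.createFromSnapshot are stand-ins, not SDK methods.
const FALLBACK_CODES = new Set(["VM_NOT_RUNNING", "RESUMED_VM_NON_RESPONSIVE"]);

async function forkOrRestore(client, sourceVm, snapshotId, count) {
  try {
    return { method: "fork", vms: await client.forkVm(sourceVm, count) };
  } catch (err) {
    if (!FALLBACK_CODES.has(err.code)) throw err; // unexpected failure: surface it
    // Each VM boots from the snapshotted setup state, not the source VM's live memory.
    const vms = await Promise.all(
      Array.from({ length: count }, () => client.createFromSnapshot(snapshotId))
    );
    return { method: "snapshot", vms };
  }
}

// Fake client whose fork fails the way the first attempt in the transcript did.
function makeSuspendedClient() {
  let n = 0;
  return {
    async forkVm() {
      const err = new Error("VM is not running");
      err.code = "VM_NOT_RUNNING";
      throw err;
    },
    async createFromSnapshot(snapshotId) {
      return { id: `vm-${n++}`, snapshotId };
    },
  };
}

forkOrRestore(makeSuspendedClient(), { id: "setup-vm" }, "sc-example", 3).then((r) => {
  console.log(r.method, r.vms.length); // snapshot 3
});
```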

Integration score

Both tasks completed and the final scripts ran correctly. The Freestyle API itself was not the issue: create, exec, snapshot, and vms.create({ snapshotId }) all worked as documented once the agent understood the environment. The friction was in getting to that understanding. Without access to platform-specific documentation, the agent had to probe the base image through trial and error, spinning up throwaway VMs to answer questions that would have been one doc lookup away with a proper tooling layer in place.

Agent Tooling

Agent tooling measures what a platform provides to help agents work with it more effectively (MCP servers, llms.txt, OpenAPI specs, skills, and framework integrations).

How we tested it

We started from a fresh session with no prior tooling configured. Two prompts: first, ask the agent what agent-specific tooling Freestyle provides; second, ask it to install the MCP server. After a session restart with the MCP active, we re-ran the integration tasks to see what changed.

What Freestyle provides

The agent researched Freestyle's agent tooling from web search and returned an accurate summary.

Example prompt

Does Freestyle provide any agent-specific tooling? Things like an MCP server,

llms.txt, OpenAPI spec, or agent skills?

The agent's response:

Yes, Freestyle has solid agent tooling:

- MCP server at https://docs.freestyle.sh/api/mcp/mcp — add it to Claude Code with:

claude mcp add --transport http https://docs.freestyle.sh/api/mcp/mcp

- llms.txt at docs.freestyle.sh/llms.txt (plus /llms-full.txt),

with all docs pages available as markdown via .md suffix

- SDKs: freestyle-sandboxes (npm) and freestyle (pip)

- Agent framework integrations: Vercel AI SDK, Mastra, LangGraph

(JS + Python), OpenAI SDK, Gemini SDK, PipeCat

No OpenAPI spec was found, but the MCP server and llms.txt cover most

agent-consumption needs.

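The response is accurate, with one slip: the suggested command omits the server name argument that claude mcp add expects. The working form, which names the server freestyle, appears in the install step. The MCP endpoint, llms.txt URL, SDK names, and framework integrations were all correct, and the absence of an OpenAPI spec was noted correctly.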

Installing the MCP

The agent was asked to install the MCP server it had just identified.

Example prompt

Can you find and install or configure the Freestyle MCP server for me?

The agent identified the correct endpoint, ran the install command, and the MCP was active after a session restart.

```shell
claude mcp add --transport http freestyle https://docs.freestyle.sh/api/mcp/mcp
```

The integration tasks with MCP active

With the MCP loaded, we re-ran the same integration tasks from the previous section. Before writing a line of code, the agent called listAvailableDocs to get the full doc index, then fetched the pages it needed:

- getDocById: /v2/vms
- getDocById: /v2/vms/templates-snapshots
- getDocById: /v2/vms/integrations/python
- getDocById: /v2/vms/integrations/python/uv
- getDocById: /v2/vms/lifecycle
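Each of those lookups is an ordinary MCP tool call. It is a JSON-RPC 2.0 request with method tools/call, preceded by a tools/list request that returns the two tool definitions. The sketch below shows the message shapes only. The initialize handshake and the HTTP transport are left out, and the argument name id for getDocById is an assumption; the real input schema comes back from tools/list.

```javascript
// Sketch of the JSON-RPC 2.0 messages an MCP client sends for these doc lookups.
// The initialize handshake and the HTTP transport are omitted, and the `id`
// argument name for getDocById is an assumption (tools/list returns the real schema).
let nextRequestId = 1;

function rpcRequest(method, params) {
  const msg = { jsonrpc: "2.0", id: nextRequestId++, method };
  if (params !== undefined) msg.params = params;
  return msg;
}

const listTools = () => rpcRequest("tools/list");
const callTool = (name, args) => rpcRequest("tools/call", { name, arguments: args });

const session = [
  listTools(),
  callTool("listAvailableDocs", {}),
  ...["/v2/vms", "/v2/vms/lifecycle"].map((docId) => callTool("getDocById", { id: docId })),
];

console.log(JSON.stringify(session[2]));
// {"jsonrpc":"2.0","id":3,"method":"tools/call","params":{"name":"getDocById","arguments":{"id":"/v2/vms"}}}
```

Nothing here executes against Freestyle's API. The two tools only return documentation, which is why the agent still needed the SDK to create and fork VMs.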

The Python integration doc surfaced a first-party uv integration that the previous session never found. Rather than probing the base image through trial and error, the agent used the documented approach and the setup completed without a single error or diagnostic VM.

VM setup complete.

- Python via uv, packages installed, SQLite DB seeded

- Snapshot taken, setup VM deleted

For the fork task, the agent read the lifecycle doc and found vm.fork(). This time it knew the method required a running VM, so it avoided the errors the previous session hit. The doc only showed single-fork syntax, so the agent still had to inspect the SDK source to discover the actual return shape ({ forks: [...] }). Once it corrected the destructuring, base.fork({ count: 3 }) ran cleanly.

Fork & run: booted from snapshot, forked 3x in one call, ran all 3 tasks in parallel

Teardown: all 4 VMs deleted

Fork 1 (DB): 3 users, 3 products

Fork 2 (numpy): mean of [10..100] = 55

Fork 3 (BTC): $71,629

The MCP is documentation-only (two tools: listAvailableDocs and getDocById). It doesn't execute API calls or manage VM state. It gives the agent accurate, structured access to Freestyle's own docs before it writes code, rather than leaving it to reconstruct that knowledge from web search results and SDK source inspection.

Agent tooling score

Freestyle has a hosted docs MCP, a well-structured llms.txt, markdown-accessible doc pages, and first-party integrations with Vercel AI SDK, Mastra, LangGraph, and others. That's a stronger baseline than most platforms at this stage. The docs MCP made a measurable difference in integration quality. It eliminated the environment discovery loop and let the agent use vm.fork() without the running-VM errors from the earlier session; only a return-shape fix was needed.

The gaps are real: the MCP is documentation-only (two tools, no VM lifecycle operations, no API execution), there are no published skills, and no OpenAPI spec exists. The docs MCP itself didn't surface in any of the thirteen discoverability sessions, meaning agents only find it when they already know to look for it. A tooling layer that requires prior knowledge to discover isn't fully working as agent tooling yet.

Overall scorecard and recommendations

What Freestyle does well

Live VM forking and built-in multi-tenant Git hosting are features no other audited platform offers. Full Linux with root access and nested virtualisation is the right positioning against E2B and Daytona. The docs MCP is hosted, simple to install, and made a measurable difference in integration quality. When agents are asked about Freestyle directly, their knowledge is accurate and positive.

The discoverability gap is the main problem

Freestyle was recommended in two of nineteen organic conditions. Apart from one unrecommended listing, it never surfaced for generic AI sandbox queries. It never surfaced at all for E2B alternatives or "newer platforms" roundups, the searches that drive developer discovery. Morph Cloud is capturing "VM forking" search real estate with its "infinibranch" positioning, and Northflank's content dominates "alternatives to X" queries. The product is strong and agents know it when asked, but they don't reach for it unprompted.

Recommendations

Content. Publish direct comparison content targeting "E2B alternatives with more Linux control" and address Morph Cloud's infinibranch framing directly, since the feature overlap is a real risk. Document the bare Debian 13 base image prominently for Python users; it is the first environment detail an agent hits without docs access.

Tooling. Expand the MCP beyond two documentation tools to cover VM lifecycle operations. A getting-started skill that bundles the hello world flow would reduce agent onboarding friction further. The VM MCP should be surfaced alongside the docs MCP in the getting-started path.
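To make the first tooling recommendation concrete, here is what a lifecycle tool could look like as an entry in an MCP tools/list response. MCP tool definitions carry a name, a description, and a JSON Schema inputSchema. The create_vm name and its two parameters are our own invention, not part of any Freestyle MCP. checkArgs is a deliberately tiny argument check, not a full JSON Schema validator.

```javascript
// Hypothetical MCP tool definition for a VM lifecycle operation (not a real Freestyle tool).
const createVmTool = {
  name: "create_vm",
  description: "Create a VM, optionally from a snapshot, and return its ID.",
  inputSchema: {
    type: "object",
    properties: {
      snapshotId: { type: "string", description: "Boot from this snapshot instead of the base image." },
      timeoutMs: { type: "integer", minimum: 1000, description: "Maximum time to wait for the VM to be ready." },
    },
    required: [],
    additionalProperties: false,
  },
};

// Minimal argument check against the schema above (not a full JSON Schema validator).
function checkArgs(tool, args) {
  const { properties, required = [], additionalProperties } = tool.inputSchema;
  const errors = [];
  for (const key of required) if (!(key in args)) errors.push(`missing ${key}`);
  for (const [key, value] of Object.entries(args)) {
    const prop = properties[key];
    if (!prop) {
      if (additionalProperties === false) errors.push(`unexpected ${key}`);
      continue;
    }
    if (prop.type === "string" && typeof value !== "string") errors.push(`${key} must be a string`);
    if (prop.type === "integer" && !Number.isInteger(value)) errors.push(`${key} must be an integer`);
    if (prop.minimum !== undefined && typeof value === "number" && value < prop.minimum) {
      errors.push(`${key} below ${prop.minimum}`);
    }
  }
  return errors;
}

console.log(checkArgs(createVmTool, { snapshotId: "sc-example" }).length); // 0
```

An agent discovers a tool like this through the same tools/list call it already makes against the docs MCP. Adding lifecycle tools would not change how agents find or call the server.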